TrueSolvers is an independent technology publisher with a professional editorial team. Every article is independently researched, sourced from primary documentation, and cross-checked before publication.

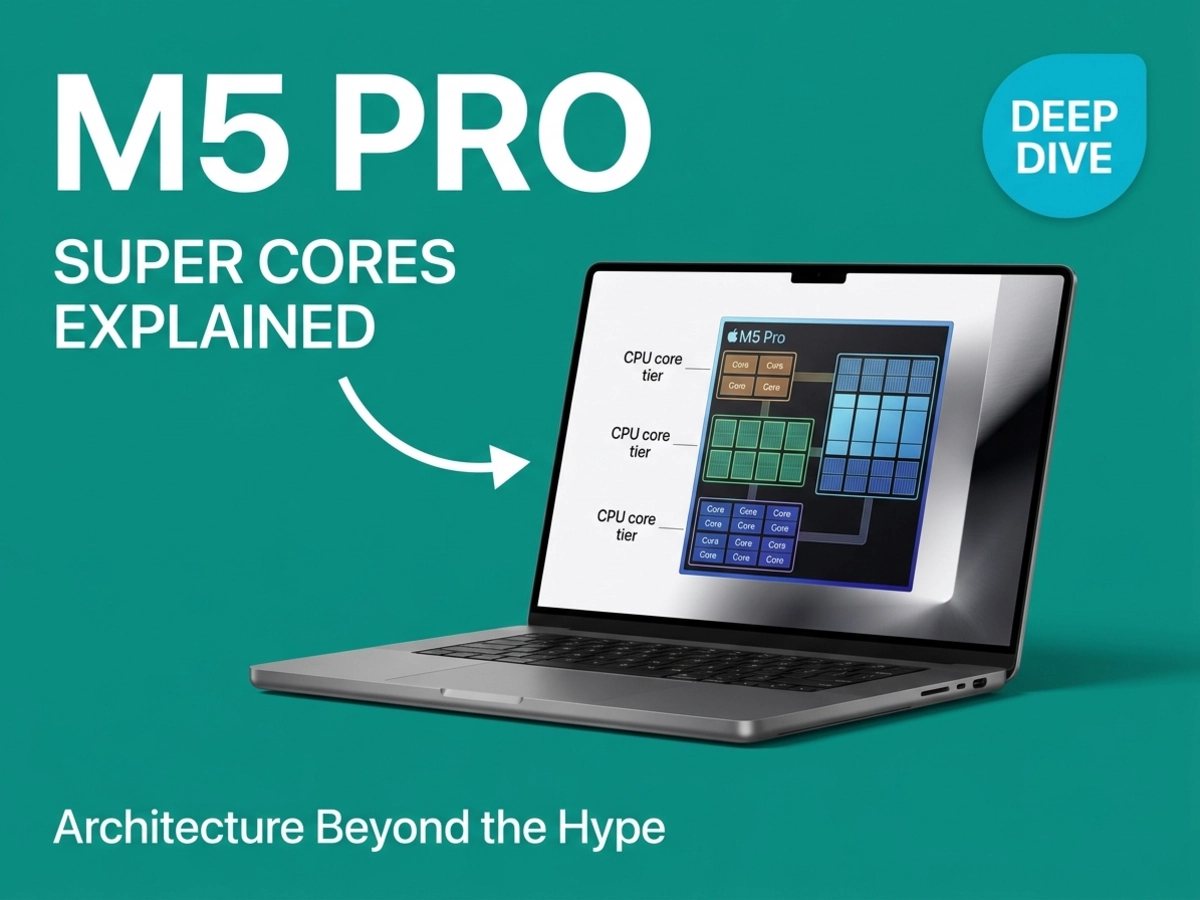

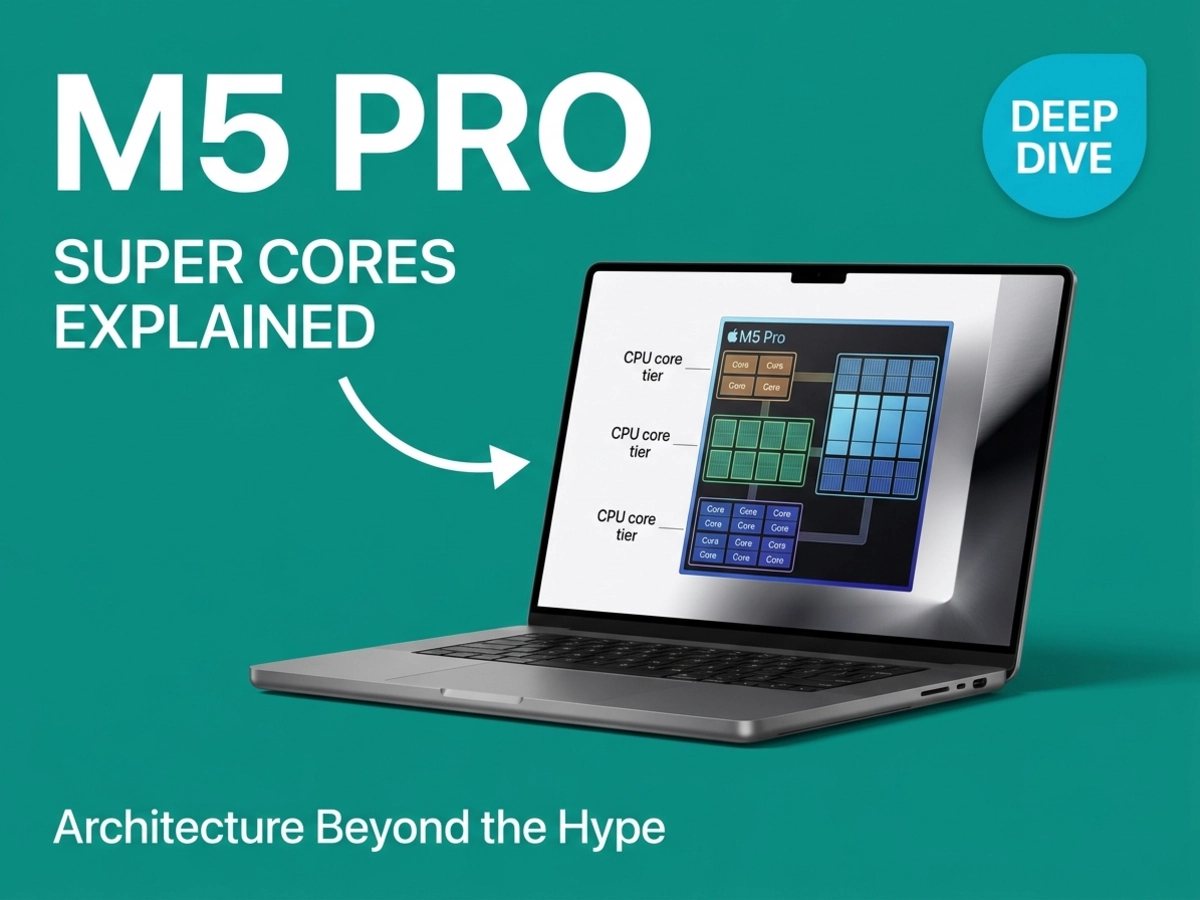

Apple's M5 Pro and M5 Max introduced a naming system for CPU cores that confused nearly every reviewer who covered the announcement. The chips that shipped in March 2026 arrived with three core types, two of which share labels with cores from previous generations while meaning something entirely different. That confusion is worth resolving, but it also points toward something more significant: a structural architectural shift that makes the "super core" debate almost beside the point.

To understand the M5 Pro and M5 Max, start by accepting that Apple's core naming is genuinely confusing, and that the confusion has a specific cause.

From the M1 through the M4 generation, every Apple Silicon chip used the same vocabulary: performance cores handled demanding tasks, efficiency cores handled background work. The M5 generation broke that convention in two directions simultaneously. The old performance cores were elevated and renamed "super cores." A brand-new design took the vacated "performance core" label. The efficiency cores kept their name, but only in the base M5 chip, because the M5 Pro and M5 Max eliminated them entirely.

The result is a vocabulary collision. When you see "performance cores" in M4 documentation and "performance cores" in M5 Pro documentation, you are looking at two different CPU designs wearing the same name tag. Macworld described this clearly: the M5 Pro and M5 Max carry at most 6 super cores, significantly fewer than the 10 performance cores found in the M4 Pro, even though super cores are the direct successors to the cores the M4 generation called performance cores.

Apple spent years in briefings defending efficiency cores to reviewers who treated them as a weakness. The efficiency core label implied a compromise, a core you used when you could not afford to run the real cores. The new "performance core" designation for the upgraded middle tier is Apple's direct response to that perception, and the renaming carries a specific strategic logic: the new design earned the title upgrade. These are not the efficiency cores that were in previous MacBook Pros.

NotebookCheck's hardware testing confirmed the clock speed differential between the two new core types: super cores reach up to 4.608 GHz, while the new performance cores operate at up to 4.38 GHz. The gap is real, and it reflects a deliberate design choice rather than a manufacturing constraint.
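To put that clock gap in proportion, the two reported figures can be compared directly. This is a minimal arithmetic sketch: the constant and function names are illustrative, and only the two clock speeds come from the NotebookCheck figures quoted above.

```python
# Peak clock speeds in GHz, as quoted from NotebookCheck's testing.
SUPER_CORE_GHZ = 4.608
PERFORMANCE_CORE_GHZ = 4.38


def relative_gap(higher: float, lower: float) -> float:
    """Return how much larger `higher` is than `lower`, as a fraction of `lower`."""
    return (higher - lower) / lower


gap = relative_gap(SUPER_CORE_GHZ, PERFORMANCE_CORE_GHZ)
print(f"Super cores peak about {gap:.1%} higher than the new performance cores")
```

Peak clock speed says nothing about work done per clock, so this percentage should not be read as a performance difference between the two core types.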

The base M5 MacBook Pro uses a familiar two-tier structure: 4 super cores for demanding tasks and 6 efficiency cores for background work, totaling a 10-core CPU. The M5 Pro and M5 Max depart from that model entirely. The M5 Pro ships in two configurations, a 15-core option with 5 super cores and 10 performance cores, and an 18-core option with 6 super cores and 12 performance cores. The M5 Max uses the 18-core layout exclusively, with 6 super cores and 12 performance cores.
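The configurations above can be laid out as a small table and checked for consistency. This is a hedged sketch: the `CpuConfig` class and its field names are hypothetical, and the core counts are only the ones stated in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuConfig:
    """Core counts for one chip configuration, as described in the article."""
    name: str
    super_cores: int
    performance_cores: int
    efficiency_cores: int

    @property
    def total_cores(self) -> int:
        return self.super_cores + self.performance_cores + self.efficiency_cores


CONFIGS = [
    CpuConfig("M5 (base)", super_cores=4, performance_cores=0, efficiency_cores=6),
    CpuConfig("M5 Pro (15-core)", super_cores=5, performance_cores=10, efficiency_cores=0),
    CpuConfig("M5 Pro (18-core)", super_cores=6, performance_cores=12, efficiency_cores=0),
    CpuConfig("M5 Max (18-core)", super_cores=6, performance_cores=12, efficiency_cores=0),
]

for cfg in CONFIGS:
    print(
        f"{cfg.name:<17} total={cfg.total_cores:>2}  "
        f"super={cfg.super_cores}  performance={cfg.performance_cores:>2}  "
        f"efficiency={cfg.efficiency_cores}"
    )
```

Laid out this way, one pattern stands out: every Pro and Max configuration pairs each super core with two of the new performance cores, while the base M5 is the only entry with any efficiency cores.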

Neither M5 Pro nor M5 Max includes any efficiency cores. Apple's reasoning, relayed in its briefings, is that modern performance cores running at low voltages are capable of handling background workloads without needing a dedicated efficiency-optimized design. This mirrors an approach Qualcomm also took with its Oryon architecture. The question is whether Apple's new "performance cores" live up to that premise or simply paper over the gap.

The M5 spec pages describe a three-tier CPU hierarchy that maps directly onto three distinct workload categories: burst single-thread work (super cores), sustained parallel throughput at high efficiency (new performance cores), and low-power background processing (efficiency cores, base M5 only). The new performance cores sit between the previous two tiers in both clock speed and power draw, explicitly designed for sustained multithreaded scaling rather than peak burst performance.

Apple's official announcement claimed up to 30% faster multithreaded performance for the M5 Pro versus the M4 Pro. That is a meaningful generational jump. The architectural basis for it matters, though, because Architosh documented that the TSMC N3P manufacturing process delivers only approximately 4% improvement in transistor density over the previous node. The performance gain came from architectural changes, not from shrinking the transistors.

The honest caveat here is that Apple has not released detailed microarchitectural documentation for the new performance cores. The company described them in briefings as "derived from the super core architecture" and "optimized for power-efficient multithreaded performance for pro workloads." Jason Snell, who attended Apple briefings, reported that Apple confirmed the new cores are an all-new design rather than a renamed efficiency core. The absence of independent microarchitectural analysis means the full extent of the redesign remains unconfirmed; the strongest available evidence is Apple's own characterization and the clock speed differential NotebookCheck measured.

From the M1 through the M4, every Apple Silicon Pro and Max chip was a single piece of silicon: one die containing the CPU, GPU, Neural Engine, and memory controllers. That design worked extremely well for five generations. It also had a physical ceiling: past a certain die area, manufacturing yield rates decline steeply and the cost per working chip climbs with them.

The M5 Pro and M5 Max break from that pattern. They use what Apple calls Fusion Architecture, combining two separate third-generation 3-nanometer dies using high-bandwidth, low-latency advanced packaging. This makes the M5 Pro and M5 Max the first Apple Silicon chips outside the Ultra line to use a multi-die design.

The engineering challenge Apple had to solve was memory unification. AMD's chiplet architecture and Intel's multi-die packaging both require developers to manage data placement across discrete memory pools, because the CPU and GPU sit on separate dies with separate memory allocations. Apple's implementation maintains a single shared memory pool across both dies, meaning the CPU, GPU, and Neural Engine all access the same memory without any software-level management. The developer experience is identical to previous generations.

The most consequential aspect of Fusion Architecture is what it preserves rather than what it changes: a single unified memory pool across both dies.

Technology.org's analysis documented the specific die arrangement: the CPU die is identical across both the M5 Pro and M5 Max. It houses the 18-core CPU, the Neural Engine, and all system controllers. The GPU die varies by model, 20 cores for the Pro and 40 cores for the Max. The M5 Max, in this configuration, is architecturally a CPU die paired with a larger GPU die rather than a fundamentally different chip.

This also means the GPU-side memory bandwidth scales with the GPU die. The M5 Max with a 40-core GPU reaches 614 GB/s of memory bandwidth. The M5 Pro reaches 307 GB/s. The M4 Pro, for reference, delivered 273 GB/s.
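The relationship between those three bandwidth figures is easier to see as ratios. The following is a minimal sketch using only the numbers quoted above; the dictionary and function names are illustrative.

```python
# Memory bandwidth in GB/s, as quoted in the article.
BANDWIDTH_GB_PER_S = {
    "M4 Pro": 273,
    "M5 Pro": 307,
    "M5 Max": 614,
}


def bandwidth_ratio(chip: str, baseline: str) -> float:
    """Return the bandwidth of `chip` as a multiple of `baseline`."""
    return BANDWIDTH_GB_PER_S[chip] / BANDWIDTH_GB_PER_S[baseline]


max_vs_pro = bandwidth_ratio("M5 Max", "M5 Pro")
pro_generational_gain = bandwidth_ratio("M5 Pro", "M4 Pro") - 1.0

print(f"M5 Max vs M5 Pro: {max_vs_pro:.1f}x")
print(f"M5 Pro vs M4 Pro: +{pro_generational_gain:.1%}")
```

The Max figure is exactly double the Pro figure, matching the doubling of GPU cores between the two GPU dies, while the Pro-to-Pro generational increase is far smaller.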

The chiplet packaging market is approximately $40 billion annually, with nearly all data-center AI products built on this approach, as Om Malik's essay citing Ars Technica's analysis reported. Apple has not confirmed details about future chip configurations. But once Apple proved that inter-die unified memory works at the Pro and Max tier, the question for future chips shifts from "how large a single die can we build?" to "how many dies can we connect, and in which directions?" The evidence strongly suggests this is Apple's template for future chip scaling rather than a one-time implementation choice. The M5 Ultra's construction, and every subsequent Apple Silicon scale-up product, will be answering that question.

The CPU narrative around M5 Pro and M5 Max is a naming controversy. The GPU narrative is an architectural change, and it received less coverage largely because there was no naming drama attached to it.

The M5 Pro ships with up to a 20-core GPU. The M5 Max ships with up to a 40-core GPU. Those numbers are identical to the M4 generation. What changed is inside each core. Every GPU core in the M5 family now contains a dedicated Neural Accelerator, a hardware unit designed specifically for matrix multiplication, the mathematical operation at the core of modern AI inference. Previous generations ran AI workloads through the same shader units that handled graphics rendering, meaning AI tasks and rendering tasks competed for the same resources. Neural Accelerators run in a dedicated parallel pipeline.
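For readers unfamiliar with why matrix multiplication matters so much here, the sketch below shows the operation itself on a toy neural-network layer. It is plain Python running on the CPU and does not use or model the Neural Accelerators in any way; the `matmul` helper, the matrix sizes, and the values are all made up for illustration.

```python
def matmul(a: list[list[float]], b: list[list[float]]) -> list[list[float]]:
    """Multiply an (m x k) matrix by a (k x n) matrix using nested lists."""
    inner = len(b)
    if any(len(row) != inner for row in a):
        raise ValueError("inner dimensions do not match")
    cols = len(b[0])
    return [
        [sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for row in a
    ]


# Toy layer: 2 input vectors with 3 features each, projected to 2 outputs.
activations = [
    [1.0, 2.0, 3.0],
    [0.5, 0.0, -1.0],
]
weights = [
    [1.0, 0.0],
    [0.0, 1.0],
    [2.0, -1.0],
]

outputs = matmul(activations, weights)
print(outputs)
```

Each output value is an independent sum of products, which is why the operation parallelizes so well. Real models repeat it with matrices thousands of entries wide, which is the kind of work that dedicated matrix hardware is designed to take on.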

CPU gains from M4 to M5 are evolutionary. The GPU architecture change is genuinely structural. The benchmark data reflects that distinction clearly.

Apple's official announcement claimed over 4x peak GPU compute for AI tasks compared to the previous generation, along with 4x faster LLM prompt processing than the M4 Pro and M4 Max. Early synthetic benchmarks showed more modest gains, closer to 20% in Geekbench AI scores, as NotebookCheck's CPU analysis reported. NotebookCheck concluded that benchmark tools are not yet optimized to leverage Neural Accelerators, a common pattern with new dedicated compute units, which need application-level support before they can be fully utilized.

9to5Mac's review compilation documented Geekbench 6 scores from early M5 Max reviews: a single-core score of 4,338 and a multi-core score of 29,430. The single-core lead reflects the super core architecture's IPC (instructions per clock) advantage, driven by what Apple described as increased front-end bandwidth, a new cache hierarchy, and enhanced branch prediction. NotebookCheck's hardware testing measured CPU gains from M4 to M5 Max at roughly 11-12% in Cinebench single-core and approximately 12-15% in multi-threaded workloads, consistent with Apple's own stated figures for the Max tier. Those gains are real but modest.

The GPU AI compute story, for users whose work involves running local LLMs, AI image generation, or CoreML-based tools, represents a more substantial change in capability.

The coverage distribution does not match the architectural significance distribution. CPU naming consumed the majority of launch coverage. Neural Accelerators, which represent a hardware-dedicated AI compute layer with no equivalent in any previous Apple Silicon Mac chip, received comparatively limited attention. For most professional users upgrading from M4 Pro or M4 Max, the CPU gains will be visible but unremarkable. The workflows that will feel genuinely different are the ones that put AI inference on the GPU.

The upgrade case for M5 Pro and M5 Max is not uniform across the installed base. The M5 Pro and M5 Max are genuinely compelling for one group and a hard sell for another.

Apple's announcement cited up to 2.5x higher multithreaded performance versus M1 Pro and M1 Max. That figure aligns with the cumulative CPU architecture improvements across four chip generations. For M1 or M2 Pro and Max owners, the case is straightforward: multithreaded performance has more than doubled, memory bandwidth has increased substantially, and the neural processing capabilities of the M5 generation are architecturally different from anything in the M1 or M2 era. If performance-bound workloads are the reason for upgrading, the M5 Pro or M5 Max will make a visible difference.

The AI argument is even stronger for this group. M1 and M2 machines lack the Neural Accelerators in the GPU, and their older Neural Engine designs are significantly slower for modern inference tasks. Apple's broader platform maturity across iOS, iPadOS, and macOS is also accelerating the shift away from older architectures: iOS 26.4 Beta signals the formal end of Intel app support, making older Apple Silicon machines the practical minimum for forward-looking software compatibility. Users running local LLMs, Stable Diffusion workflows, or CoreML-heavy applications will experience a materially different machine.

For M4 owners, the math is narrower. CPU gains from M4 Max to M5 Max measure at approximately 12-15%. Multi-core improvements from M4 Pro to M5 Pro are larger, around 23-30%, reflecting the benefit of the new 12-core performance tier in sustained parallel workloads. In one early review, an M5 Pro review unit was 23% faster in multi-core testing than the reviewer's own M4 Max, though that comparison reflects a specific test methodology rather than a general rule.

For M4 Pro or M4 Max owners who upgraded within the last year, the CPU gains alone are unlikely to justify a new machine. The meaningful question is whether Neural Accelerators change the AI workload picture enough to matter for a specific workflow. For video editors relying on DaVinci Resolve effects, animators using AI-assisted tools, or developers running local inference models daily, the GPU compute change is real. For users whose work is primarily code compilation, document editing, or conventional video export, the gains will be marginal relative to what they already have.

The renaming does not reach back to older chips. The super core naming applies exclusively to the M5 family. Apple confirmed this at the time of the M5 announcement: M1 through M4 chips will retain their existing core labels. Performance cores on an M4 Pro remain performance cores. Efficiency cores remain efficiency cores. macOS Tahoe 26.3.1 updated the labeling for M5-family chips only, primarily because the base M5 shipped in late 2025 with its top-tier cores still labeled performance cores, before the new nomenclature was announced alongside the M5 Pro and M5 Max in March 2026.

Apple eliminated efficiency cores from both the M5 Pro and M5 Max. The base M5 chip retains efficiency cores, but the Pro and Max variants replaced them entirely with the new performance core design. Apple's reasoning is that the new performance cores are capable of operating at sufficiently low power states to handle background workloads without needing a dedicated efficiency-optimized design. Qualcomm pursued a similar philosophy with its Oryon architecture. Whether this tradeoff holds in sustained background-heavy workloads is something reviewers will continue monitoring.

How Apple will build an M5 Ultra is an open question. Previous Ultra chips used a straightforward formula: bond two Max dies together using Apple's UltraFusion interconnect. That approach worked because the Max die contained both CPU and GPU on a single piece of silicon, and doubling those dies doubled everything proportionally.

The M5 Pro and M5 Max use separate CPU and GPU dies, which means the "two Max dies" formula no longer maps cleanly. Apple has not confirmed any M5 Ultra configuration or timeline, which limits how far this analysis can go. The available evidence suggests that Apple may use a more complex multi-die arrangement for the M5 Ultra, potentially combining multiple GPU dies with CPU dies in a configuration that would have been impossible under the previous single-die Max design. Om Malik's analysis, citing Ars Technica's reporting on multi-die packaging architecture, found that Apple now has significantly more flexibility in how it scales from Max to Ultra than any previous generation allowed.

Neural Accelerators in every GPU core most directly benefit workloads that rely on matrix multiplication at the GPU level. In practical terms, the clearest beneficiaries are local large language model inference (running models like Llama locally), AI image generation tools, CoreML-based features in creative applications, and AI-accelerated effects in DaVinci Resolve. Apple's official announcement cited 4x faster LLM prompt processing compared to M4 Pro and M4 Max for these workloads specifically.

Conventional workflows that do not route significant computation through GPU-based AI inference, such as traditional video encoding, code compilation, or spreadsheet work, will experience only the CPU and memory bandwidth improvements. Those gains are real but in the 12-30% range depending on the specific workload and starting generation, not the larger multiples Apple cited for AI-specific tasks.

Testing claims cited from Apple's official announcement were conducted by Apple in February 2026 using preproduction systems. Independent benchmark results may vary based on hardware configuration, test methodology, and software optimization levels.