TrueSolvers is an independent technology publisher with a professional editorial team. Every article is independently researched, sourced from primary documentation, and cross-checked before publication.

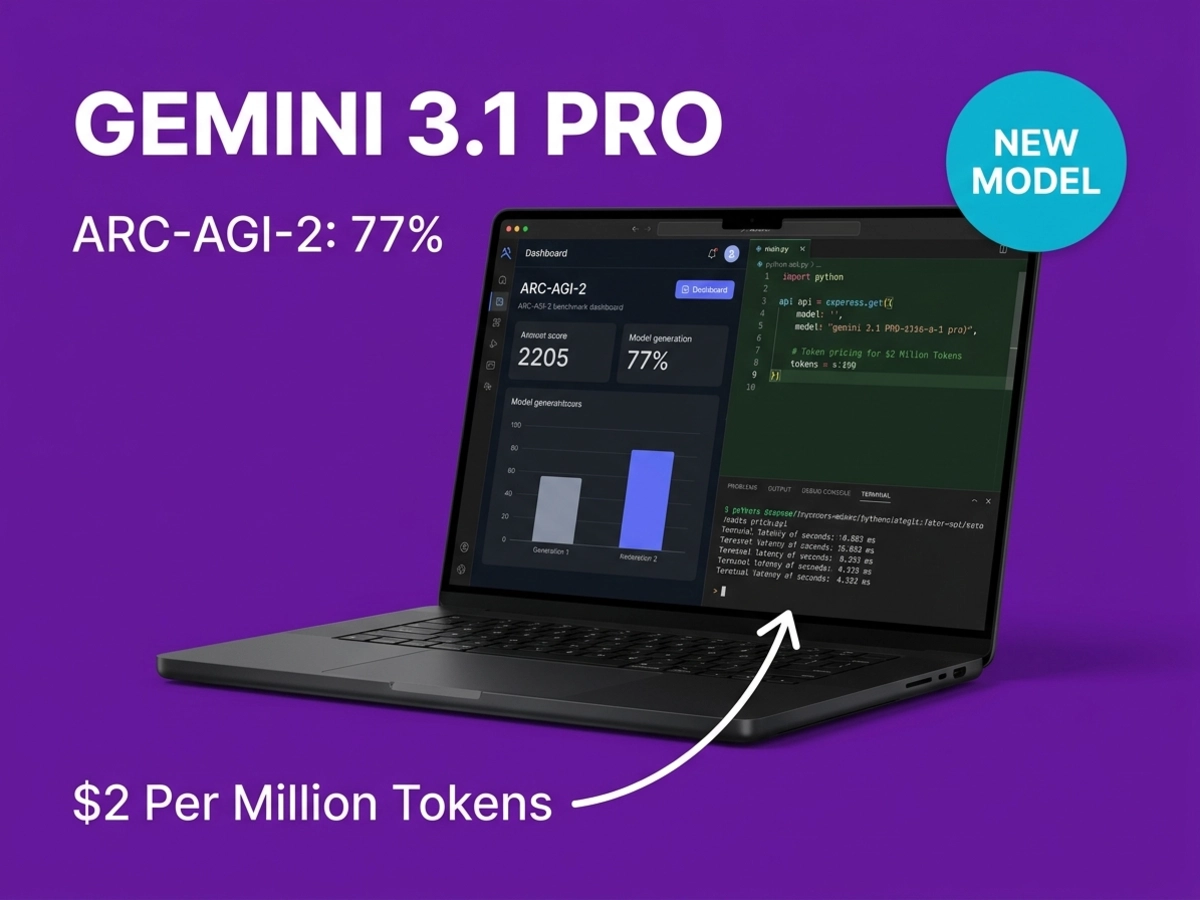

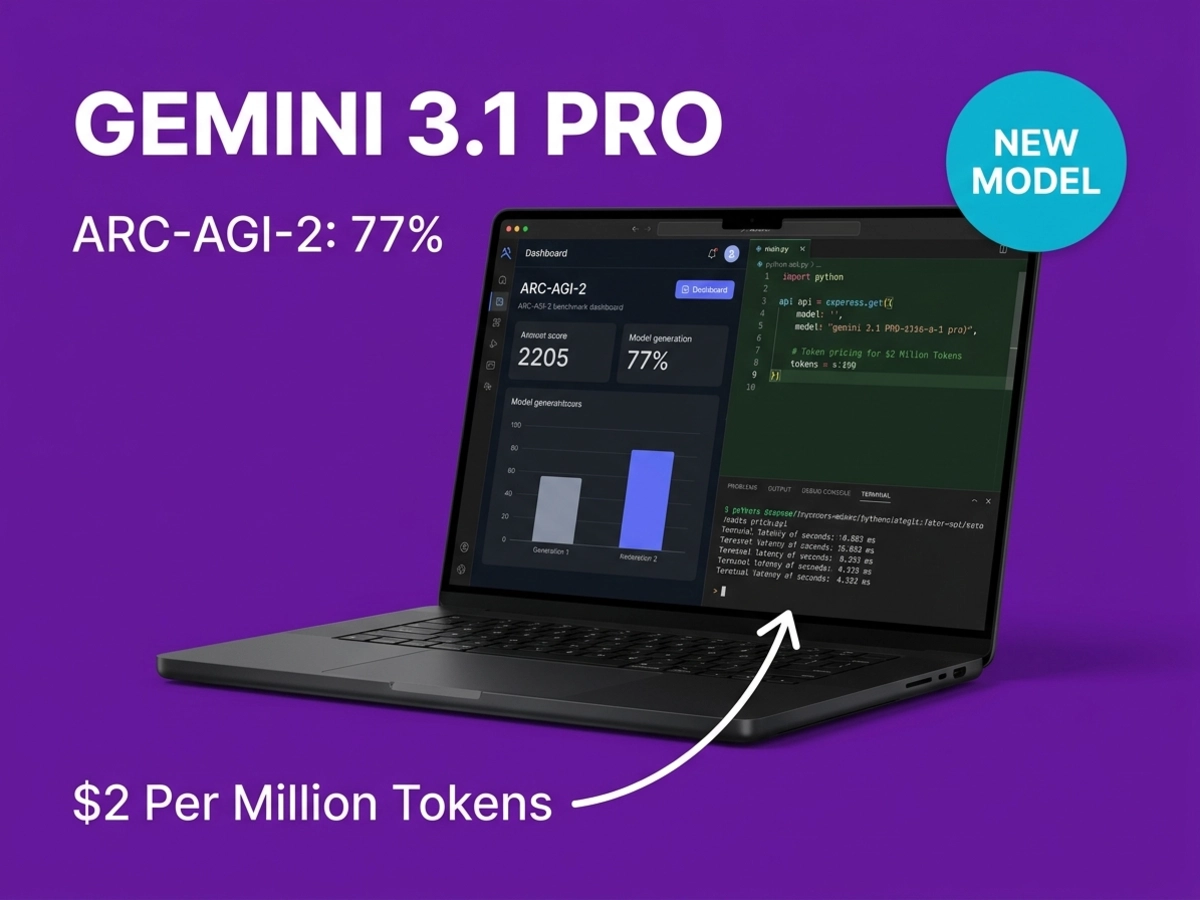

Google released Gemini 3.1 Pro on February 19, 2026, the company's first 0.1 version increment rather than its usual 0.5 step. The model targets abstract reasoning specifically, scoring 77.1% on ARC-AGI-2 against its predecessor's 31.1%. That gap matters because ARC-AGI-2 measures how models handle logic patterns they have never encountered, and the benchmark was deliberately designed to resist brute-force scaling. At $2 per million input tokens and $12 per million output tokens, the model costs 2.5 times less than Claude Opus 4.6 on input and roughly half as much on output while leading on most benchmarks, which makes it a strong value for developers evaluating production deployments.

To understand what Gemini 3.1 Pro's score represents, it helps to understand what ARC-AGI-2 was built to defeat. The benchmark was designed from the ground up to expose the gap that raw scale and memorization cannot close. Where conventional benchmarks reward pattern matching across training data, ARC-AGI-2 presents visual grid transformation puzzles that require inferring novel rules from a handful of examples, then applying those rules to scenarios the model has never encountered. Every task in the set was validated against human testing with over 400 participants, who averaged 60% under controlled conditions, and every task was confirmed solvable by at least two humans in fewer than two attempts. Pure language models without reasoning systems score 0% on this benchmark. The puzzles specifically target symbolic interpretation, compositional reasoning, and contextually gated rules, which are exactly the capabilities that additional training data does not easily purchase.

When the ARC Prize Foundation released ARC-AGI-2 in March 2025, every frontier model crashed on contact. OpenAI's o1-pro scored 1% at $200 per task. o3-mini-high scored 0%. Claude 3.7 scored 0%. Gemini 2.0 Flash reached 1.3%. The benchmark had achieved its design goal: it separated human-like fluid intelligence from pattern-matching at scale. Starting with that baseline, the ARC Prize Foundation also introduced an efficiency metric alongside raw accuracy, requiring that systems report cost per task as well as score, recognizing that genuine intelligence includes resource efficiency, not just problem-solving rate.

Gemini 3.1 Pro's 77.1% on the semi-private task set is ARC Prize Verified, placing it above the human average and well ahead of Claude Opus 4.6 at 68.8% and GPT-5.2 at 52.9% on the same evaluation. The predecessor, Gemini 3 Pro, reached 45% in Deep Think extended thinking mode; 3.1 Pro achieves 77.1% at standard API inference. That distinction matters for production use: the higher score is not gated behind expensive extended reasoning. It reflects the base model's improved capacity to generalize.

Every frontier model scored at or near 0% when ARC-AGI-2 launched in March 2025. In under twelve months, the leading models advanced to 77% on a benchmark specifically engineered to block the techniques that drove previous AI progress curves. That trajectory cannot be explained by memorization or benchmark saturation. It reflects a real shift in how the leading models handle novel rule inference, and Gemini 3.1 Pro currently sits at the frontier of that capability among models available via standard API inference.

The ARC-AGI-2 headline is part of a broader picture. Gemini 3.1 Pro leads on a wide set of evaluations, but the pattern of where it leads is instructive for matching the model to actual workloads.

Graduate-level scientific knowledge, as measured by GPQA Diamond, reached 94.3%, which DataCamp's benchmark analysis documented as the highest score ever recorded on that evaluation. GPQA Diamond tests physics, chemistry, and biology knowledge at doctoral levels, covering problems that require genuine domain reasoning rather than lookup. Combined with a 1-million-token context window, the model can process entire research papers, cross-reference multi-document findings, and maintain coherent analysis across lengthy scientific literature without splitting content.

Coding capabilities show equally strong results. On LiveCodeBench Pro, Gemini 3.1 Pro scored 2887 Elo, roughly 21% ahead of GPT-5.2's 2393 Elo on competitive programming tasks. Real-world engineering work, measured through SWE-Bench Verified on actual GitHub issues from production codebases, reached 80.6%. Google ran this evaluation averaged across 10 separate attempts and disclosed that three bugs in the official SWE-Bench harness had made certain items impossible to pass for any model; the published scores reflect a corrected baseline.

Agentic capability also improved significantly from Gemini 3 Pro. Performance on APEX-Agents, which measures multi-step autonomous task completion, reached 33.5%, a 15.1-point improvement over the predecessor. Tool coordination on MCP Atlas reached 69.2%, and web research tasks on BrowseComp reached 85.9%, an improvement of 26.7 points, reflecting substantially better performance on tasks requiring multi-source synthesis under real browsing conditions.

Gemini 3.1 Pro leads on abstract reasoning, scientific knowledge, competitive coding, and autonomous workflows across the February 2026 benchmark disclosures. Those are broad capability categories that cover the majority of developer use cases. The model leads on 12 of 18 tracked benchmarks in the comparative set. Where it does not lead is narrower but consequential for specific buyer profiles, as the following paragraphs show.

Not every benchmark story favors Gemini 3.1 Pro, and the limitations cluster around a specific type of work.

GDPval-AA measures performance on economically valuable expert tasks: financial modeling, legal synthesis, business strategy, and professional research reports. These are the tasks where human practitioners with deep domain expertise evaluate output quality, not just technical correctness. On this benchmark, Claude Sonnet 4.6 leads with 1633 Elo versus Gemini 3.1 Pro's 1317 Elo, a 316-point gap. Claude Opus 4.6 scored 1606 on the same evaluation. A gap of this size on a benchmark validated against real-world economic value is not statistical noise. Independent analysis from Artificial Analysis' Intelligence Index confirmed Gemini 3.1 Pro improved on GDPval-AA but did not close the Claude lead.

There is also a small regression on multimodal understanding. Gemini 3 Pro scored 81.0% on MMMU-Pro, which evaluates complex visual reasoning across documents, charts, and images. Gemini 3.1 Pro scores 80.5% on the same evaluation. The difference is modest, but it runs counter to the upgrade narrative and is worth noting for teams whose workflows depend heavily on complex document understanding.

Terminal-based coding presents a third gap. GPT-5.3-Codex leads Terminal-Bench 2.0 at 77.3% versus Gemini 3.1 Pro's 68.5% using the standard Terminus-2 harness. On SWE-Bench Pro (Public), GPT-5.3-Codex edges ahead at 56.8% versus 54.2%. One complication in reading Terminal-Bench results: Google did not publish Gemini's performance using GPT-5.3-Codex's custom Codex harness, making the comparison harness-asymmetric. The gap likely reflects real optimization for shell-native development workflows in OpenAI's specialized model.

These three limitations define a fairly specific buyer profile for whom Gemini 3.1 Pro is not the straightforward upgrade: teams running expert knowledge synthesis workflows at scale, teams with complex multimodal document pipelines that depend on the MMMU-Pro class of understanding, and developers working primarily in terminal-heavy environments. For everyone else, the benchmark leadership is broad enough to make Gemini 3.1 Pro the default choice at its price point. The most decision-relevant question is not "which model leads on the most benchmarks" but "which model leads on the benchmarks that map to my actual production tasks."
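One way to make that question concrete is a small lookup that maps a workload type to the benchmarks resembling it and reports who leads each one. The sketch below uses only scores quoted in this article; the workload names and the workload-to-benchmark mapping are hypothetical and would need to reflect a team's own tasks.

```python
# Scores quoted in this article. Each benchmark is only compared against
# itself, so Elo-based and percentage-based results are never mixed.
SCORES = {
    "ARC-AGI-2": {"Gemini 3.1 Pro": 77.1, "Claude Opus 4.6": 68.8, "GPT-5.2": 52.9},
    "LiveCodeBench Pro": {"Gemini 3.1 Pro": 2887, "GPT-5.2": 2393},
    "GDPval-AA": {"Claude Sonnet 4.6": 1633, "Claude Opus 4.6": 1606, "Gemini 3.1 Pro": 1317},
    "Terminal-Bench 2.0": {"GPT-5.3-Codex": 77.3, "Gemini 3.1 Pro": 68.5},
    "SWE-Bench Pro (Public)": {"GPT-5.3-Codex": 56.8, "Gemini 3.1 Pro": 54.2},
}

# Hypothetical mapping from a workload type to the benchmarks that resemble it.
WORKLOAD_BENCHMARKS = {
    "expert_knowledge_work": ["GDPval-AA"],
    "terminal_heavy_coding": ["Terminal-Bench 2.0", "SWE-Bench Pro (Public)"],
    "novel_reasoning": ["ARC-AGI-2"],
    "competitive_coding": ["LiveCodeBench Pro"],
}


def leaders_for(workload: str) -> dict[str, str]:
    """Return the top-scoring model on each benchmark mapped to a workload."""
    return {
        bench: max(SCORES[bench], key=SCORES[bench].get)
        for bench in WORKLOAD_BENCHMARKS[workload]
    }


if __name__ == "__main__":
    for workload in WORKLOAD_BENCHMARKS:
        print(workload, "->", leaders_for(workload))
```

A lookup like this only reflects the models each benchmark result happened to include, so a missing model means "not reported here" rather than "scored lower".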

The 7.5x cost advantage cited across much of the coverage is accurate for input tokens but traces to Opus 4.1, the previous Claude generation, priced at $15 input and $75 output per million tokens. Claude Opus 4.6, the current generation, is priced at $5 input and $25 output per million tokens. Gemini 3.1 Pro is priced at $2 input and $12 output per million tokens. The accurate 2026 comparison is therefore a 2.5x advantage on input tokens and approximately a 2.1x advantage on output tokens over Opus 4.6. That advantage is still meaningful, particularly at high token volumes, but the actual numbers matter for budget modeling.
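The ratios are easy to check directly. The sketch below takes the list prices quoted in this article and divides each competitor's price by Gemini 3.1 Pro's; the function name and table layout are illustrative only.

```python
# USD per million tokens, as quoted in this article.
PRICES = {
    "Gemini 3.1 Pro": {"input": 2.0, "output": 12.0},
    "Claude Opus 4.6": {"input": 5.0, "output": 25.0},
    "Claude Opus 4.1": {"input": 15.0, "output": 75.0},
}


def price_ratio(other: str, base: str = "Gemini 3.1 Pro") -> dict[str, float]:
    """How many times more `other` costs than `base`, per token type."""
    return {
        kind: round(PRICES[other][kind] / PRICES[base][kind], 2)
        for kind in ("input", "output")
    }


if __name__ == "__main__":
    print(price_ratio("Claude Opus 4.6"))  # {'input': 2.5, 'output': 2.08}
    print(price_ratio("Claude Opus 4.1"))  # {'input': 7.5, 'output': 6.25}
```

The 7.5x figure only appears on the Opus 4.1 input row; against the same model the output ratio is 6.25x.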

The pricing is identical to Gemini 3 Pro, which means organizations already using that model can upgrade without any cost impact. The only required change is a model ID update in the API call.

Context caching reduces effective input costs by up to 75% for applications that process repeated contexts, such as systems that load the same codebase, documentation set, or knowledge base across multiple requests. The Batch API halves all token costs for asynchronous workloads that do not require real-time responses. A high-volume deployment processing one billion tokens monthly could see effective per-token costs drop substantially below the headline rates with both optimizations active.
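To see how far the headline rates can move, here is a rough cost model. It rests on several assumptions that are not confirmed above: cached input receives the full 75% discount (the article says "up to"), the batch discount stacks multiplicatively with caching, cache storage fees are ignored, and the 800M input / 200M output split of the one billion tokens is made up for illustration.

```python
def effective_monthly_cost(
    input_tokens: int,
    output_tokens: int,
    cached_fraction: float = 0.0,
    use_batch: bool = False,
    input_price: float = 2.0,     # USD per million input tokens (standard context)
    output_price: float = 12.0,   # USD per million output tokens (standard context)
    cache_discount: float = 0.75, # assumed best-case caching discount
    batch_discount: float = 0.5,  # Batch API halves token costs
) -> float:
    """Estimate monthly spend in USD under the stated assumptions."""
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0 and 1")

    cached = input_tokens * cached_fraction
    fresh = input_tokens - cached
    # Cached tokens are billed at the discounted rate, fresh tokens at full rate.
    billable_input = fresh + cached * (1.0 - cache_discount)

    total = billable_input / 1_000_000 * input_price
    total += output_tokens / 1_000_000 * output_price
    if use_batch:
        # Assumption: the batch discount applies on top of caching.
        total *= 1.0 - batch_discount
    return total


if __name__ == "__main__":
    # Hypothetical split of one billion monthly tokens.
    tokens_in, tokens_out = 800_000_000, 200_000_000
    print(effective_monthly_cost(tokens_in, tokens_out))
    print(effective_monthly_cost(tokens_in, tokens_out, cached_fraction=0.5))
    print(effective_monthly_cost(tokens_in, tokens_out, cached_fraction=0.5, use_batch=True))
```

Under these assumptions the same traffic falls from $4,000 to $3,400 with half the input cached, and to $1,700 if it can also run through the Batch API. Real bills depend on how the discounts actually combine, so treat the numbers as an upper bound on the savings.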

Long-context pricing creates one nuance worth factoring in. Above the 200,000-token threshold, Gemini's input price doubles to $4 per million tokens and its output price rises by half to $18 per million. Claude Sonnet 4.6, priced at $3 input and $15 output per million at standard context, becomes comparably priced for long-context workloads. For applications regularly processing inputs above 200,000 tokens, the choice between Gemini 3.1 Pro and Sonnet 4.6 narrows considerably on a cost basis, shifting the decision toward which model performs better on the specific long-context tasks in question.
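The threshold effect is easiest to see per request. This sketch assumes that once a prompt exceeds 200,000 tokens, the whole request (input and output) is billed at the higher tier rather than only the tokens above the threshold; that billing rule is an assumption and should be checked against the current pricing page.

```python
LONG_CONTEXT_THRESHOLD = 200_000  # prompt tokens

# (input, output) prices in USD per million tokens, as quoted in this article.
STANDARD_TIER = (2.0, 12.0)
LONG_TIER = (4.0, 18.0)


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request, assuming whole-request tiering."""
    if input_tokens > LONG_CONTEXT_THRESHOLD:
        in_price, out_price = LONG_TIER
    else:
        in_price, out_price = STANDARD_TIER
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    print(request_cost(150_000, 4_000))  # below the threshold
    print(request_cost(400_000, 4_000))  # above the threshold
```

With these inputs, a 150,000-token prompt costs about $0.35 and a 400,000-token prompt about $1.67, so prompt size grows 2.7x while cost grows nearly 5x.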

The full context window extends to 1 million tokens with an output capacity of 64,000 tokens, providing five times the context capacity of competing models that cap at 200,000 tokens. For tasks requiring analysis of entire codebases, book-length documents, or extended video transcripts, that capacity advantage is independent of the per-token pricing comparison.

Gemini 3.1 Pro launched on February 19 across the Gemini API via AI Studio and Antigravity, Vertex AI for enterprise deployments, Gemini Enterprise, Gemini CLI, Android Studio, and the Gemini app with higher usage limits for Google AI Pro and Ultra plan subscribers. NotebookLM access is limited to Pro and Ultra subscribers. The model ID for API access is gemini-3.1-pro-preview. Through Google's partnership with Apple, these capabilities are also accessible to iPhone users alongside Android, making Gemini 3.1 Pro one of the few frontier models with meaningful native reach across both mobile ecosystems. Developers currently using Gemini 3 Pro should note that gemini-3-pro-preview is scheduled for deprecation on March 26, 2026, making the migration timeline concrete.

The most practically significant addition in Gemini 3.1 Pro for developers is the thinking_level parameter, and the most important detail about it is a default behavior that is easy to miss. According to Google's API documentation, Gemini 3.1 Pro defaults to HIGH if no thinking_level is specified in the request. The four available levels are low, medium, high, and max. The medium tier is new in 3.1 Pro; Gemini 3 Pro only offered low and high. That means any developer migrating from 3 Pro and sending requests without an explicit thinking_level will silently run every query at high reasoning depth, with the associated latency and cost implications.

For most production workloads, medium is the appropriate default. Tasks involving code generation, content synthesis, moderate data analysis, and standard API workflows benefit from medium-level reasoning at lower latency than high or max. Reserve high for tasks that genuinely require multi-step synthesis across complex constraints, and max for the most demanding reasoning challenges. The thinking_level parameter cannot be combined with thinking_budget from the Gemini 2.5 series in the same API call; attempting this returns a 400 error. Temperature should remain at the default value of 1.0 when thinking is enabled, as lower values can cause repetitive looping on complex reasoning tasks.
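These rules can be enforced client-side before a request is ever sent. The helper below is a sketch, not Google's SDK: it builds a plain dictionary, and the camelCase field names (thinkingConfig, thinkingLevel) are an assumption about the REST request shape that should be verified against the API reference. The level names are taken from this article.

```python
from typing import Optional

# Level names as listed in this article.
THINKING_LEVELS = ("low", "medium", "high", "max")


def build_generation_config(
    thinking_level: str = "medium",
    legacy_thinking_budget: Optional[int] = None,
    temperature: float = 1.0,
) -> dict:
    """Build a generation config dict with an explicit thinking level.

    Field names are assumed, not confirmed; adjust to the real API shape.
    """
    if thinking_level not in THINKING_LEVELS:
        raise ValueError(f"unknown thinking_level: {thinking_level!r}")
    if legacy_thinking_budget is not None:
        # The API is described as rejecting this combination with a 400,
        # so fail early with a clearer message.
        raise ValueError("thinking_budget cannot be combined with thinking_level")
    if temperature != 1.0:
        raise ValueError("keep temperature at the default 1.0 when thinking is enabled")
    return {
        "temperature": temperature,
        "thinkingConfig": {"thinkingLevel": thinking_level},
    }


if __name__ == "__main__":
    print(build_generation_config())
    print(build_generation_config(thinking_level="high"))
```

Making medium the helper's default means a forgotten argument degrades to the cheaper tier instead of the server-side HIGH default.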

Multi-turn function calling requires returning thought signatures in each subsequent message. Omitting them also generates a 400 error. For teams building agentic pipelines that chain multiple API calls, this is worth verifying in test environments before production deployment.
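A pre-flight check in a test suite can catch dropped signatures before the API does. The sketch below assumes a conversation history stored as a list of dictionaries, where model turns carry function calls in a functionCall part and the signature in a thoughtSignature field. Both field names and the turn-level rule (at least one signed part per function-calling turn) are assumptions about the message format, not confirmed details.

```python
def turns_missing_signature(history: list[dict]) -> list[int]:
    """Return indices of model turns that call a function but carry no signature."""
    missing = []
    for index, turn in enumerate(history):
        if turn.get("role") != "model":
            continue
        parts = turn.get("parts", [])
        has_call = any("functionCall" in part for part in parts)
        has_signature = any(part.get("thoughtSignature") for part in parts)
        if has_call and not has_signature:
            missing.append(index)
    return missing


if __name__ == "__main__":
    # Hypothetical history: the second model turn lost its signature.
    history = [
        {"role": "user", "parts": [{"text": "What is the weather in Oslo?"}]},
        {"role": "model", "parts": [
            {"functionCall": {"name": "get_weather", "args": {"city": "Oslo"}},
             "thoughtSignature": "opaque-signature"},
        ]},
        {"role": "user", "parts": [{"functionResponse": {"name": "get_weather"}}]},
        {"role": "model", "parts": [
            {"functionCall": {"name": "get_forecast", "args": {"city": "Oslo"}}},
        ]},
    ]
    print(turns_missing_signature(history))  # [3]
```

Running a check like this over recorded conversations is cheaper than discovering the 400 error mid-pipeline.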

Gemini 3 Pro gave developers two thinking options: low and high. Low was often insufficient for complex tasks; high was overkill and expensive for standard workloads. The medium tier closes that gap, and it should be the explicit default in any production configuration that does not require the full depth of high reasoning.

Preview status means the general availability designation has not yet been assigned. Google explicitly positioned this release for gathering feedback on ambitious agentic workflows before removing the preview label. For production-critical deployments, validating on a representative sample of actual workloads before full commitment remains the appropriate path, particularly for complex multi-step autonomous tasks where reasoning failures can cascade.

Does the 1-million-token context window cost the same as standard context?

No. Gemini 3.1 Pro's standard pricing of $2 input and $12 output per million tokens applies to contexts up to 200,000 tokens. Above that threshold, the input price doubles to $4 per million and the output price rises to $18 per million. The 1-million-token capacity is available, but the per-token cost for using it is meaningfully higher.

When will Gemini 3.1 Pro move from preview to general availability?

Google has not published a specific GA date. Preview status signals that the company is gathering production feedback before finalizing the release. Gemini 3 Pro (gemini-3-pro-preview) is being deprecated March 26, 2026, which creates urgency for migration independent of the 3.1 Pro GA timeline.

Should developers on Gemini 3 Pro migrate now?

For most use cases, yes. The benchmark improvements are substantial, the pricing is identical, and the migration requires only a model ID change. The main migration task is explicitly configuring thinking_level to medium rather than accepting the default HIGH behavior. Teams should also verify multi-turn function calling implementations return thought signatures correctly before moving production traffic.
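For teams that keep model settings in application config, the migration can be sketched as a small transform. The two model IDs come from this article; the config keys ("model", "thinking_level") are hypothetical and stand in for whatever structure an application already uses.

```python
OLD_MODEL_ID = "gemini-3-pro-preview"
NEW_MODEL_ID = "gemini-3.1-pro-preview"


def migrate_config(config: dict, default_level: str = "medium") -> dict:
    """Return a copy of an app-level config updated for Gemini 3.1 Pro.

    Swaps the deprecated model ID and pins an explicit thinking level
    when none is set, so the server-side HIGH default is never relied on.
    An existing thinking_level is left untouched.
    """
    migrated = dict(config)
    if migrated.get("model") == OLD_MODEL_ID:
        migrated["model"] = NEW_MODEL_ID
    migrated.setdefault("thinking_level", default_level)
    return migrated


if __name__ == "__main__":
    print(migrate_config({"model": "gemini-3-pro-preview"}))
    print(migrate_config({"model": "gemini-3-pro-preview", "thinking_level": "high"}))
```

Leaving an explicit level untouched keeps deliberate choices intact while closing the silent-default gap for everything else.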

What multimodal inputs does Gemini 3.1 Pro accept?

The model supports text, images, audio, video, and complete code repositories as native inputs. This native multimodal processing eliminates preprocessing steps required for models that handle only text, which simplifies workflows for applications handling mixed media content.

Is Gemini 3.1 Pro appropriate for expert knowledge work like legal or financial analysis?

It depends on the workload. The GDPval-AA benchmark, which measures performance on economically valuable expert tasks including financial modeling and legal synthesis, shows Claude Sonnet 4.6 and Opus 4.6 leading by a substantial margin. For applications where output quality on nuanced professional knowledge work is the primary criterion, the Claude models retain a measurable advantage at current pricing. For reasoning-intensive tasks, scientific analysis, coding, and high-volume production workloads, Gemini 3.1 Pro leads on both benchmark performance and cost efficiency.