TrueSolvers is an independent technology publisher with a professional editorial team. Every article is independently researched, sourced from primary documentation, and cross-checked before publication.

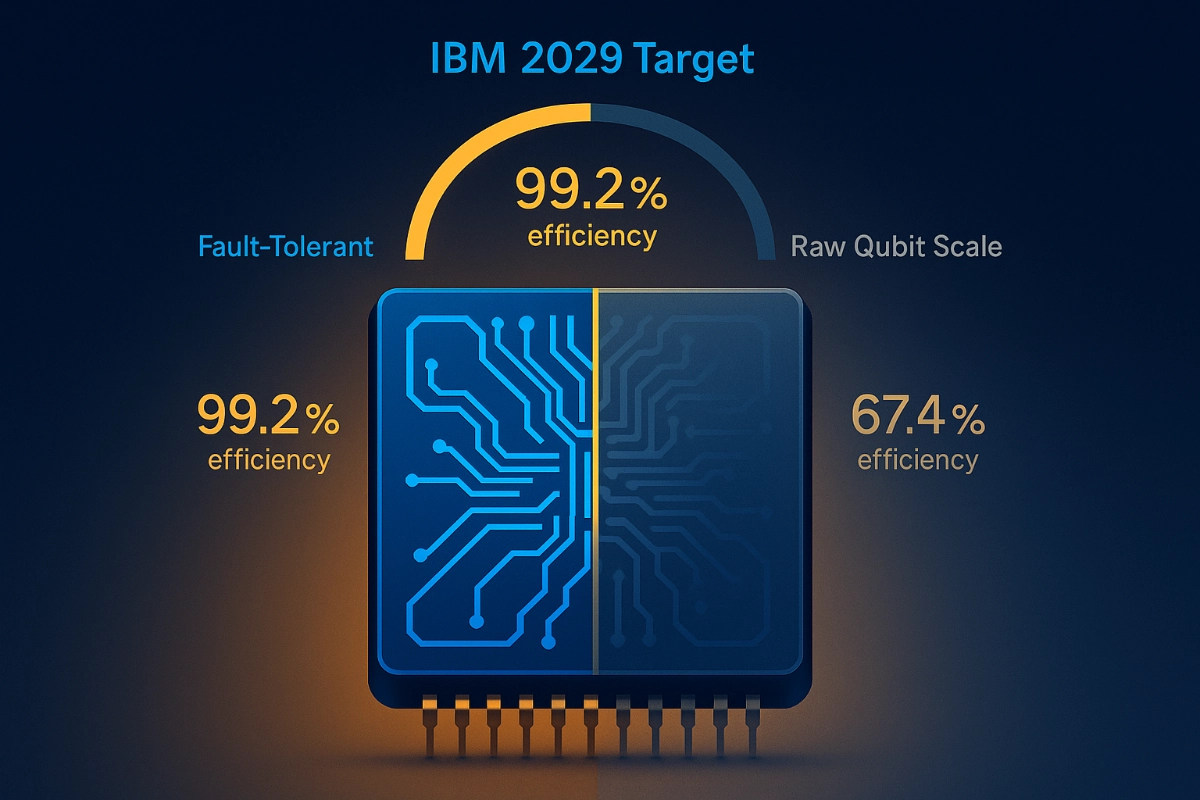

IBM claims fault-tolerant quantum computers by 2029, but raw qubit counts hide critical hardware constraints. New data shows that error-correction efficiency, not scale, determines real progress. For enterprise planners, this means today’s quantum investments must prioritize architecture over headline numbers.

Most coverage of IBM's quantum roadmap leads with a number. The Condor processor hit 1,121 qubits in December 2023. Nighthawk, launched in November 2025, has 120. That apparent regression looks alarming until you understand why Condor was largely useless for real computation and why Nighthawk matters far more than its smaller qubit count suggests.

Condor was a fabrication achievement, not a computational one. Its qubit count scaled up, but the architecture lacked the connectivity, the error correction infrastructure, and the circuit depth needed to perform meaningful computation. IBM acknowledged this directly: Condor's value was learning how to build at scale, not learning how to compute at scale. Eagle, IBM's 127-qubit processor from 2021, was considered more practically valuable because its error rate ran five times lower than Condor's. Fewer qubits, better computation.

Nighthawk continues this pattern, and the specification that matters is not qubit count. The IBM Newsroom's November 2025 announcement documented Nighthawk at 120 qubits with 218 tunable couplers and a capacity for 5,000 two-qubit gates, a 30% improvement in circuit complexity over the prior Heron generation at comparable error rates. Future Nighthawk iterations are planned to support 7,500 gates by the end of 2026, 10,000 by 2027, and 15,000 by 2028 with more than 1,000 connected qubits via extended coupler architectures.
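A quick count shows why 218 couplers fits a square lattice. The sketch below assumes Nighthawk's 120 qubits sit in a 10 × 12 grid with one tunable coupler on every nearest-neighbor edge. That layout is our assumption for illustration; IBM's exact arrangement may differ.

```python
def square_lattice_couplers(rows: int, cols: int) -> int:
    """Count nearest-neighbour edges in a rows x cols square grid."""
    horizontal = rows * (cols - 1)  # links inside each row
    vertical = (rows - 1) * cols    # links between adjacent rows
    return horizontal + vertical


def average_degree(rows: int, cols: int) -> float:
    """Mean couplers per qubit (each edge touches two qubits)."""
    return 2 * square_lattice_couplers(rows, cols) / (rows * cols)


if __name__ == "__main__":
    rows, cols = 10, 12  # hypothetical 120-qubit arrangement
    print(rows * cols, square_lattice_couplers(rows, cols))
    print(round(average_degree(rows, cols), 2))
```

Under that assumption the grid has exactly 218 nearest-neighbor edges, matching the announced coupler count. This is consistent with a square lattice but does not prove that layout. Interior qubits get four couplers each, and edge qubits get fewer.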

After reviewing the specification history across IBM processors from Eagle to Nighthawk, the pattern is consistent enough to state directly: gate depth and coupler density are the metrics that predict algorithmic utility. Physical qubit count is a fabrication headline. Enterprise planners who are still benchmarking quantum hardware primarily by qubit numbers are measuring the wrong variable, and that misalignment will only become more costly as the industry transitions to logical qubit reporting.

The 2029 commitment from IBM is precise: a system called Starling capable of executing 100 million quantum gates across 200 logical qubits. That number, 200 logical qubits, is what matters for enterprise workloads in chemistry, materials modeling, and optimization. Logical qubits are error-corrected, stable, and programmable in ways that physical qubits running without correction cannot be.

The question that determines whether Starling is real or aspirational is how many physical qubits IBM needs to create those 200 logical ones. Under surface codes, the error correction approach that dominated quantum research for two decades, the ratio is approximately 1,000 physical qubits per logical qubit. Starling with 200 logical qubits would require 200,000 physical qubits under surface code assumptions. That does not exist and could not be built by 2029.
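The rule-of-thumb figure of roughly 1,000 physical qubits per logical qubit can be reproduced from the standard rotated surface code layout. That layout uses d² data qubits and d² − 1 measurement qubits for a code of distance d. The sketch below assumes this layout and picks distances in the low twenties for illustration. The distance actually required depends on physical error rates and circuit size, and routing or magic-state overhead is ignored.

```python
def rotated_surface_code_qubits(distance: int) -> int:
    """Physical qubits for one logical qubit: d*d data plus d*d - 1 measure qubits."""
    return distance * distance + (distance * distance - 1)


def surface_code_total(logical_qubits: int, distance: int) -> int:
    """Physical qubits for many independent surface-code patches (memory only)."""
    return logical_qubits * rotated_surface_code_qubits(distance)


if __name__ == "__main__":
    for d in (11, 17, 22, 25):
        print(d, rotated_surface_code_qubits(d))
    # 200 logical qubits at an assumed distance of 22
    print(surface_code_total(200, 22))
```

At an assumed distance of 22, each logical qubit costs 967 physical qubits. Two hundred of them land near the 200,000 figure quoted above.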

IBM's answer is the bivariate bicycle (BB) code family, published in a 2024 paper in Nature. The specific code used in Starling's architecture, called the gross code, encodes 12 logical qubits into 144 data qubits plus 144 syndrome check qubits, 288 physical qubits total. That is roughly 24 physical qubits per logical qubit rather than 1,000, a reduction of more than 90% over surface code overhead. The BB family accomplishes this while maintaining comparable error protection, by exploiting a code structure where each qubit connects to only six others and where all required connections can be routed across just two physical chip layers.
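The gross code's structure can be checked numerically. The sketch below builds bivariate bicycle parity-check matrices with l = 12, m = 6, A = x³ + y + y² and B = y³ + x + x², where x and y are cyclic shifts. These parameters are our reading of the Nature paper's [[144,12,12]] entry; treat them as an assumption and check them against the paper. The sketch verifies three things: the X and Z checks commute, each data qubit touches six checks, and the logical-qubit count is k = n − rank(H_X) − rank(H_Z) over GF(2). It does not compute the code distance.

```python
import numpy as np

L_DIM, M_DIM = 12, 6  # assumed gross-code parameters l and m


def monomial(x_pow: int, y_pow: int) -> np.ndarray:
    """Matrix of x^x_pow * y^y_pow, where x = S_l (x) I_m and y = I_l (x) S_m."""
    sx = np.roll(np.eye(L_DIM, dtype=np.uint8), x_pow, axis=1)  # cyclic shift
    sy = np.roll(np.eye(M_DIM, dtype=np.uint8), y_pow, axis=1)
    return np.kron(sx, sy)


def gf2_rank(matrix: np.ndarray) -> int:
    """Rank over GF(2) via Gaussian elimination with XOR row operations."""
    m = matrix.copy() % 2
    rank = 0
    for col in range(m.shape[1]):
        candidates = np.nonzero(m[rank:, col])[0]
        if candidates.size == 0:
            continue
        pivot = rank + candidates[0]
        m[[rank, pivot]] = m[[pivot, rank]]  # move the pivot row up
        others = np.nonzero(m[:, col])[0]
        others = others[others != rank]
        m[others] ^= m[rank]                 # clear this column in every other row
        rank += 1
        if rank == m.shape[0]:
            break
    return rank


# A = x^3 + y + y^2, B = y^3 + x + x^2 (XOR is addition mod 2)
A = monomial(3, 0) ^ monomial(0, 1) ^ monomial(0, 2)
B = monomial(0, 3) ^ monomial(1, 0) ^ monomial(2, 0)
H_X = np.hstack([A, B])        # X-type checks
H_Z = np.hstack([B.T, A.T])    # Z-type checks

n_data = H_X.shape[1]
n_checks = H_X.shape[0] + H_Z.shape[0]
commute = not np.any((H_X.astype(int) @ H_Z.T.astype(int)) % 2)
checks_per_qubit = set((H_X.sum(axis=0) + H_Z.sum(axis=0)).tolist())
k_logical = n_data - gf2_rank(H_X) - gf2_rank(H_Z)

if __name__ == "__main__":
    print(n_data, n_checks, n_data + n_checks)
    print(commute, checks_per_qubit, k_logical)
```

With these parameters the script reports 144 data qubits and 144 check qubits (288 in total), commuting checks, six checks per data qubit, and k = 12 logical qubits, matching the figures quoted above.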

The roughly tenfold efficiency gain over surface codes is not a theoretical upper bound. It reflects a specific, hardware-realizable encoding scheme with known connectivity constraints and a demonstrated decoder. This is not a future software upgrade; it is an architectural prerequisite that determines whether Starling needs 200,000 physical qubits or approximately 10,000.
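A figure near 10x appears when the two codes are compared at the same code distance. The gross code is a [[144,12,12]] code, so it protects 12 logical qubits at distance 12 using 288 physical qubits. A rotated surface code of distance 12 needs 2·12² − 1 = 287 physical qubits per logical qubit. The sketch below does that arithmetic and also counts gross-code blocks for 200 logical qubits. It models memory blocks only. Logical processing units, magic-state circuitry and inter-module links are left out, so the block total is a floor, not an estimate of Starling's full size.

```python
import math

GROSS_BLOCK_QUBITS = 144 + 144   # data + syndrome check qubits per block
GROSS_BLOCK_LOGICAL = 12
GROSS_DISTANCE = 12


def surface_code_qubits(distance: int) -> int:
    """Rotated surface code: d*d data qubits plus d*d - 1 measure qubits."""
    return 2 * distance * distance - 1


def matched_distance_ratio() -> float:
    """Surface-code qubits divided by gross-code qubits for 12 logical qubits at d = 12."""
    surface = GROSS_BLOCK_LOGICAL * surface_code_qubits(GROSS_DISTANCE)
    return surface / GROSS_BLOCK_QUBITS


def gross_memory_qubits(logical_qubits: int) -> tuple[int, int]:
    """Blocks needed and physical qubits inside those blocks (memory only)."""
    blocks = math.ceil(logical_qubits / GROSS_BLOCK_LOGICAL)
    return blocks, blocks * GROSS_BLOCK_QUBITS


if __name__ == "__main__":
    print(round(matched_distance_ratio(), 1))  # 3,444 / 288
    print(gross_memory_qubits(200))
```

At matched distance the ratio is about 12 to 1, in line with the roughly tenfold claim. Seventeen blocks hold 4,896 physical qubits. That count is consistent with a full-system estimate near 10,000 once the components this sketch ignores are added.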

IBM Fellow Matthias Steffen, who leads quantum-processor technology, told Live Science that the exact physical qubit count for Starling is "yet to be finalized," with estimates pointing to roughly 10,000 physical qubits for the full system. That figure, if achievable, makes the 2029 date physically plausible. Surface codes would make it impossible.

For context on trajectory, IBM's Blue Jay system planned for 2033 targets 2,000 logical qubits and one billion gates, also built on this same qLDPC foundation.

IBM's modular roadmap from 2025 to 2029 has four named stages. Understanding what each processor tests, and what it does not, is essential for judging how credible the 2029 date is.

Loon (2025): IBM's November 2025 announcement confirmed that Loon demonstrates all key hardware components needed for fault-tolerant quantum computing. These include c-couplers for long-range qubit connections, qubit reset circuits, and multi-layer chip routing. Critically, Loon does not combine these components into a working logical qubit. It validates that each element can coexist on a single die, at low yield, with known fabrication complications. It is a proof of components, not a proof of integration.

Kookaburra (2026): This is the first processor designed to integrate a quantum error correction memory module with a logical processing unit (LPU) in a single, replaceable hardware block. One Kookaburra module, built around the gross code, creates 12 logical qubits from approximately 144 data qubits. It is the first time IBM will attempt to run an actual error-corrected computation rather than just store encoded information.

Cockatoo (2027): Two Kookaburra-style modules connected via quantum links (L-couplers). Moor Insights' analysis of IBM briefings estimated Cockatoo at roughly 288 physical qubits for 24 logical qubits across two gross code blocks. That figure matches the data qubits of two blocks (2 × 144); counting each block's 144 syndrome check qubits as well would put the total nearer 576. This stage validates module-to-module quantum entanglement, a prerequisite for Starling to scale.

Starling (2028-2029): Approximately 17 gross code blocks, each paired with an LPU, plus magic state injection circuits for the non-Clifford gate operations required for universal quantum computation. The system targets 200 logical qubits and 100 million gates.

Kookaburra is not one milestone among many. It is the first test of whether the entire architecture works as an integrated unit. A failure or delay at Kookaburra does not just push back that processor; it delays Cockatoo, which in turn delays Starling. The year that matters most is therefore not 2029 but 2026. Enterprise planners asking whether IBM's 2029 date is credible should watch the Kookaburra delivery closely. The key question is whether IBM publicly demonstrates a working logical qubit on a single integrated module, rather than another component validation.

IBM has delivered on each roadmap milestone since launching its public roadmap in 2020. That track record is real, but Kookaburra is a qualitatively different milestone from every prior one. It requires integration, not just fabrication.

Two infrastructure developments underpin IBM's timeline credibility in ways that receive less attention than processor announcements.

The first is manufacturing. IBM has committed all future quantum chip production to its Albany NanoTech Complex, where 300mm wafer fabrication has been operational and producing chips including Nighthawk and Loon. The larger wafers have halved processor build time and enabled a tenfold increase in chip physical complexity, meaning the multi-layer routing and long-range coupler designs that would have required years to iterate at prior scales can now be tested and refined in months. The same tooling used for the most advanced classical semiconductor fabrication is now applied to quantum chips, with the added constraint of maintaining superconducting properties across layers.

The second development is the decoder, and it is the one that arrived unexpectedly early. Fault-tolerant quantum computing requires real-time classical processing to read error syndromes from the quantum chip and feed corrections back before the system degrades. Prior projections assumed this would require co-located high-performance computing hardware, an infrastructure constraint that would have limited where Starling could actually be deployed.
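A toy throughput model shows why decoder speed matters. If syndrome rounds arrive faster than the decoder can process them, unprocessed rounds pile up and corrections fall further behind. The sketch assumes a single decoder that handles rounds in order. It treats the 480-nanosecond latency reported for Relay-BP as a per-round figure, which is our assumption. The syndrome cycle times are hypothetical values chosen for illustration, not IBM specifications.

```python
def backlog_growth_per_round(cycle_ns: float, decode_ns: float) -> float:
    """Extra undecoded rounds accumulated per syndrome round.

    Toy model: one round arrives every cycle_ns and a single decoder
    needs decode_ns per round. If decoding keeps pace, nothing piles up.
    """
    return max(0.0, 1.0 - cycle_ns / decode_ns)


def backlog_after(rounds: int, cycle_ns: float, decode_ns: float) -> float:
    """Approximate backlog, in rounds, after `rounds` syndrome cycles."""
    return rounds * backlog_growth_per_round(cycle_ns, decode_ns)


if __name__ == "__main__":
    decode_ns = 480.0  # treated here as a per-round latency (assumption)
    for cycle_ns in (1_000.0, 400.0):  # hypothetical syndrome cycle times
        print(cycle_ns, backlog_after(1_000_000, cycle_ns, decode_ns))
```

With a 1,000 ns cycle the decoder keeps up and no backlog forms. With a 400 ns cycle roughly one round in six is left undecoded, and the backlog grows without bound.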

IBM's November 2025 announcement documented achieving real-time qLDPC decoding in under 480 nanoseconds, using a decoder called Relay-BP running on FPGA hardware. The result was a 10x speedup over leading competing approaches and arrived a full year ahead of the scheduled milestone, in a development partnership with AMD. Relay-BP derives from classical belief-propagation algorithms used in telecommunications error correction, adapted for qLDPC code structures.
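Relay-BP itself is not reproduced here, but the belief-propagation core it builds on can be sketched. The example below is a textbook syndrome-based min-sum decoder, a common simplification of belief propagation. It runs on a classical 5-bit repetition code with a hypothetical error probability p = 0.1. For a quantum qLDPC code, the same message passing would run on the X-check and Z-check matrices. IBM's decoder adds further machinery that this sketch does not attempt to capture.

```python
import numpy as np


def min_sum_decode(h, syndrome, p, max_iter=20):
    """Syndrome-based min-sum belief propagation.

    Returns (error_estimate, converged). Assumes every check touches
    at least two bits.
    """
    h = np.asarray(h, dtype=np.uint8)
    syndrome = np.asarray(syndrome, dtype=np.uint8)
    n_checks, n_bits = h.shape
    prior = np.log((1 - p) / p)  # positive LLR favours "no error"
    neighbours = [np.nonzero(h[c])[0] for c in range(n_checks)]
    # variable-to-check messages start at the prior
    q = {(c, v): prior for c in range(n_checks) for v in neighbours[c]}
    estimate = np.zeros(n_bits, dtype=np.uint8)
    for _ in range(max_iter):
        r = {}  # check-to-variable messages
        for c in range(n_checks):
            flip = -1.0 if syndrome[c] else 1.0  # an unsatisfied check flips the sign
            for v in neighbours[c]:
                others = [q[(c, u)] for u in neighbours[c] if u != v]
                sign = flip * np.prod(np.sign(others))
                r[(c, v)] = sign * min(abs(m) for m in others)
        posterior = np.full(n_bits, prior)
        for (c, v), message in r.items():
            posterior[v] += message
        estimate = (posterior < 0).astype(np.uint8)
        if np.array_equal(h.astype(int) @ estimate % 2, syndrome):
            return estimate, True
        for (c, v) in q:  # leave out each check's own message
            q[(c, v)] = posterior[v] - r[(c, v)]
    return estimate, False


# 5-bit repetition code: each check compares neighbouring bits
H_REP = np.array([[1, 1, 0, 0, 0],
                  [0, 1, 1, 0, 0],
                  [0, 0, 1, 1, 0],
                  [0, 0, 0, 1, 1]])

if __name__ == "__main__":
    true_error = np.array([1, 0, 0, 0, 1])
    syndrome = H_REP @ true_error % 2
    print(min_sum_decode(H_REP, syndrome, p=0.1))
```

On this tree-shaped code the decoder recovers the weight-2 error [1, 0, 0, 0, 1] after two rounds of message passing. Quantum codes have Tanner graphs with short cycles and many equivalent error patterns, and plain BP often fails to converge on them. That weakness is part of the motivation for BP variants such as Relay-BP.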

As recently as 2024, both the fabrication timeline and the decoder deployment model were open risks. This combination, an operational 300mm fab and an FPGA-deployable decoder, resolves both. Starling does not need a supercomputer in the same room to function. That changes its practical deployment profile significantly.

IBM's Jay Gambetta summarized the current state for Live Science by saying that the science has been solved. That framing is accurate, but enterprise planners need to interpret it carefully.

The science that has been solved includes: the theoretical framework for qLDPC fault tolerance (the 2024 Nature paper), the decoder design (Relay-BP, now demonstrated), and the hardware component validation (Loon). These are genuine milestones and they distinguish IBM's 2029 commitment from the kind of vague "quantum in a decade" promises that characterized the industry before 2023.

What has not been solved is integration at scale. Every required component has been shown to function; no integrated logical qubit running full error-corrected computation has yet been demonstrated. Kookaburra in 2026 will be the first attempt.

The primary near-term risk is yield. Loon's design, with its multi-layer routing, long-range c-couplers, and qubit reset circuits, is significantly more complex to fabricate without defects than any prior IBM quantum processor. Complex multi-layer chips are prone to dead qubits and non-functional couplers during production. Even a small number of non-functional elements can compromise an entire chip's utility, forcing redundancy that reduces effective logical qubit count. IBM has explicitly described Loon as a vehicle for resolving these fabrication problems before Kookaburra. The 300mm fab accelerates the learning, but there is no guarantee that yields will reach the threshold needed for Kookaburra-scale integration by 2026.

The tradeoff is real: higher coupler density gives IBM the error-correction topology it needs, but it also increases the probability of defects per chip. More connections mean more failure points, and that tradeoff scales with every step toward Starling's complexity.
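A simple yield model illustrates the coupler-density tradeoff. It assumes every coupler fails independently with the same probability, which real fabrication does not guarantee, and the defect rate and block yield below are hypothetical. The second function addresses replaceable modules. If each fabricated block works with some probability, it gives the chance that a batch contains enough working blocks. Seventeen is the number of gross code blocks in the Starling plan.

```python
import math


def defect_free_probability(n_couplers: int, p_defect: float) -> float:
    """Chance that every coupler on a chip works, assuming independent defects."""
    return (1.0 - p_defect) ** n_couplers


def prob_at_least(k_needed: int, n_built: int, block_yield: float) -> float:
    """Binomial chance that at least k_needed of n_built blocks are functional."""
    return sum(
        math.comb(n_built, k) * block_yield**k * (1.0 - block_yield) ** (n_built - k)
        for k in range(k_needed, n_built + 1)
    )


if __name__ == "__main__":
    p = 0.001  # hypothetical per-coupler defect probability
    for couplers in (218, 1_000, 5_000):  # 218 is Nighthawk's count; others hypothetical
        print(couplers, round(defect_free_probability(couplers, p), 3))
    # hypothetical: 17 working blocks needed, 90% yield per block
    for built in (17, 20, 25):
        print(built, round(prob_at_least(17, built, 0.9), 3))
```

Under these assumptions, the chance of a defect-free chip falls exponentially with coupler count. Fabricating spare blocks raises the odds of assembling a full set, at the cost of building more hardware.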

Independent technical experts interviewed by TechNewsWorld characterized the remaining challenge as "an engineering scaling exercise, not a research project." That distinction matters: the remaining risks are real but they are the kind of risks that can be managed with sufficient investment and iteration time, not the kind that require new theoretical breakthroughs.

IBM has stated explicitly that quantum advantage work done before 2029 is designed to run seamlessly on the fault-tolerant systems of 2029 and beyond. That architectural continuity is deliberate, but it is not unconditional. For enterprise technology leaders allocating capital across competing deep-tech frontiers, understanding which race you are entering matters as much as the hardware itself. The same logic drives investments like Bezos' $6.2B bet on physical-world AI, where choosing the right architectural lane early determines long-term positioning.

The Nighthawk processor uses the same square-lattice qubit topology that underpins Starling's gross code architecture. Algorithms developed for Nighthawk-era hardware rest on the same geometric foundations that Starling will use. This applies specifically to algorithms designed for square-lattice connectivity and circuits of up to 10,000 two-qubit gates. This is not accidental: IBM has designed the near-term advantage roadmap as an on-ramp to the fault-tolerant roadmap.

Algorithms that require all-to-all qubit connectivity or that assume arbitrary qubit pairing will face architectural migration when Starling arrives. Algorithms tuned to other superconducting layouts, such as the heavy-hex lattice used by IBM's earlier Eagle and Heron processors, will also need non-trivial porting work. The distinction is not about performance today; it is about avoiding a 2029 rewrite of work that enterprise teams are building now.
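One rough way to gauge porting effort is to place a circuit's qubits on a square grid and measure how far apart each two-qubit gate's operands sit. The sketch below uses a hypothetical 3 × 3 layout. It estimates the SWAP cost as (Manhattan distance − 1) per non-adjacent gate. Real transpilers route more cleverly and update the layout as SWAPs are applied, so the estimate only indicates which circuits fit the lattice natively.

```python
from itertools import combinations

Coord = tuple[int, int]


def grid_layout(n_qubits: int, width: int) -> dict[int, Coord]:
    """Place qubit q at (row, col) in row-major order on a square grid."""
    return {q: divmod(q, width) for q in range(n_qubits)}


def naive_swap_estimate(gates: list[tuple[int, int]], layout: dict[int, Coord]) -> int:
    """Sum of (Manhattan distance - 1) over gates; 0 means every gate is native."""
    total = 0
    for a, b in gates:
        (r1, c1), (r2, c2) = layout[a], layout[b]
        total += max(abs(r1 - r2) + abs(c1 - c2) - 1, 0)
    return total


if __name__ == "__main__":
    layout = grid_layout(9, width=3)
    nearest_neighbour = [(0, 1), (1, 2), (2, 5), (5, 8), (4, 7)]
    all_to_all = list(combinations(range(9), 2))
    print(naive_swap_estimate(nearest_neighbour, layout))
    print(naive_swap_estimate(all_to_all, layout))
```

A circuit written for square-lattice connectivity scores zero. An all-to-all circuit on the same nine qubits accumulates a routing cost, and that cost grows with the grid's size.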

For organizations that need a framework to verify real progress rather than roadmap claims, IBM's Quantum Advantage Tracker defines three independently verifiable challenge categories: observable estimation, variational problems, and classically verifiable tasks. Each category specifies what a quantum system must demonstrate to claim genuine advantage over classical alternatives. These benchmarks are auditable and not self-reported, which makes them a more reliable signal for enterprise procurement decisions than raw hardware announcements.

The concrete guidance for enterprise quantum strategy comes down to three decisions. First, prioritize hardware with high coupler density and square-lattice topology; that is what algorithmic compatibility with Starling requires. Second, treat 2026 as the next meaningful checkpoint, not 2029: Kookaburra's delivery is the most diagnostic near-term signal for whether the fault-tolerance timeline holds. Third, use the Quantum Advantage Tracker categories as evaluation criteria for current quantum investments, rather than relying on qubit count comparisons that will not remain meaningful as the industry transitions to logical qubit reporting.

Q: Does IBM's 2029 Starling promise depend on any unproven science?

IBM's June 2025 roadmap papers document an end-to-end architecture grounded in the 2024 Nature publication on bivariate bicycle codes, a demonstrated real-time decoder (Relay-BP), and hardware component validation through Loon. The science is formally de-risked. What remains unproven is integration: no single IBM processor has yet combined all these components into a working logical qubit. Kookaburra in 2026 is the first integrated test.

Q: What does 200 logical qubits actually mean for enterprise use cases?

Logical qubits are error-corrected and stable long enough to execute deep circuits. IBM's 100 million gate target for Starling is roughly 20,000 times the 5,000 two-qubit gates that Nighthawk can run today. This is the scale where quantum chemistry in drug discovery and materials design, and certain optimization problems, begin to outperform classical simulation. Starling does not solve every enterprise use case, but it makes a meaningful class of chemistry and optimization problems tractable.

Q: How does IBM's approach compare to competitors like Quantinuum or Google?

Google's fault-tolerance approach relies on surface codes, which require roughly 1,000 physical qubits per logical qubit. Google has demonstrated surface code error correction at small scale but has not published a comparable physical qubit reduction strategy to IBM's gross code. Quantinuum uses trapped-ion technology with different error profiles and targets a similar fault-tolerance timescale around 2030. The comparison is not straightforwardly about which is "ahead" because the technologies have different error rates, gate speeds, and connectivity constraints. IBM's specific advantage is the gross code's efficiency, reducing physical qubit requirements by more than 90% relative to surface code equivalents.

Q: Should our organization invest in current IBM quantum systems or wait for Starling?

Current IBM systems, particularly Nighthawk-era hardware, are appropriate for organizations developing algorithms they plan to eventually run at fault-tolerant scale. The square-lattice topology and gate depth constraints of Nighthawk are compatible with Starling's architecture. Organizations should not expect current systems to deliver fault-tolerant computation; they should treat them as development environments for building Starling-compatible algorithms. Waiting until 2029 to begin algorithm development means losing at minimum three years of architectural learning that will be difficult to recover quickly.
