TrueSolvers is an independent technology publisher with a professional editorial team. Every article is independently researched, sourced from primary documentation, and cross-checked before publication.

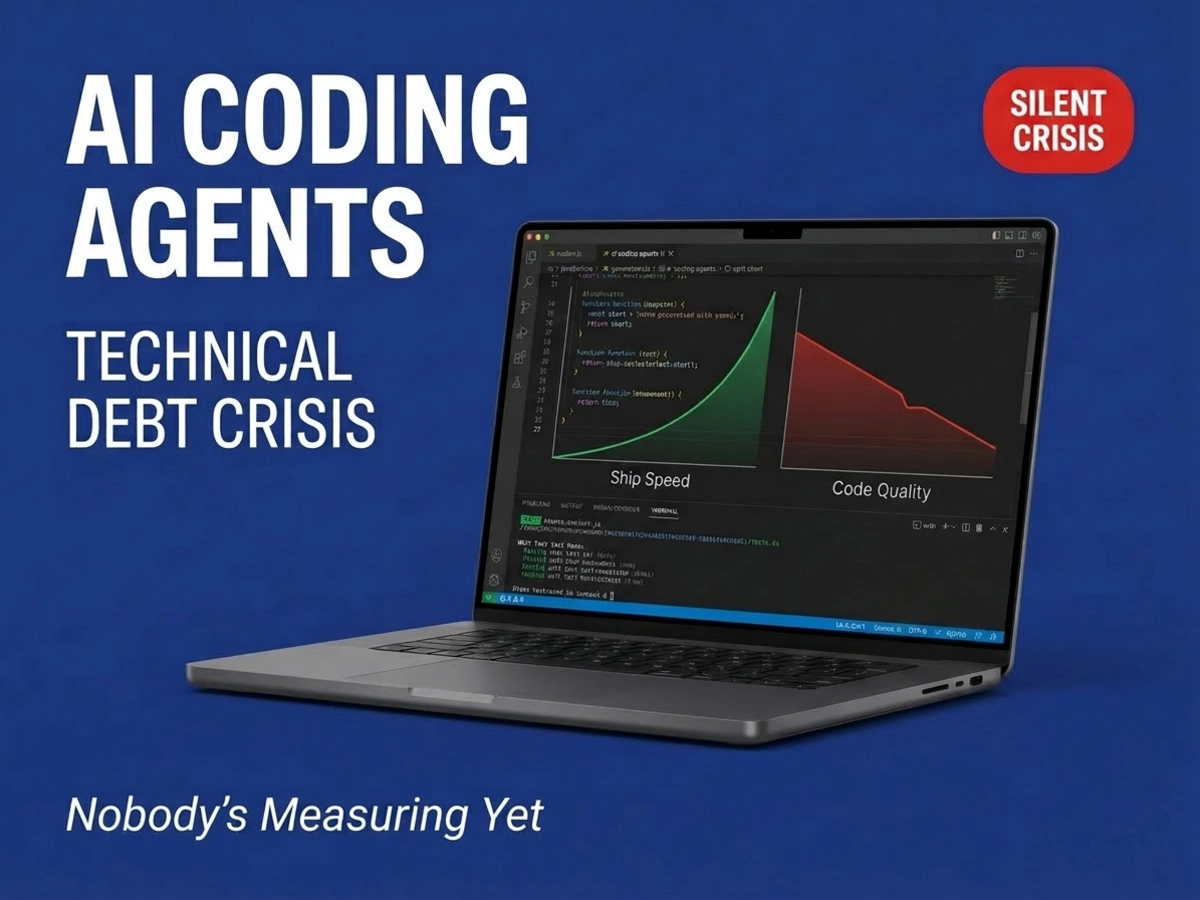

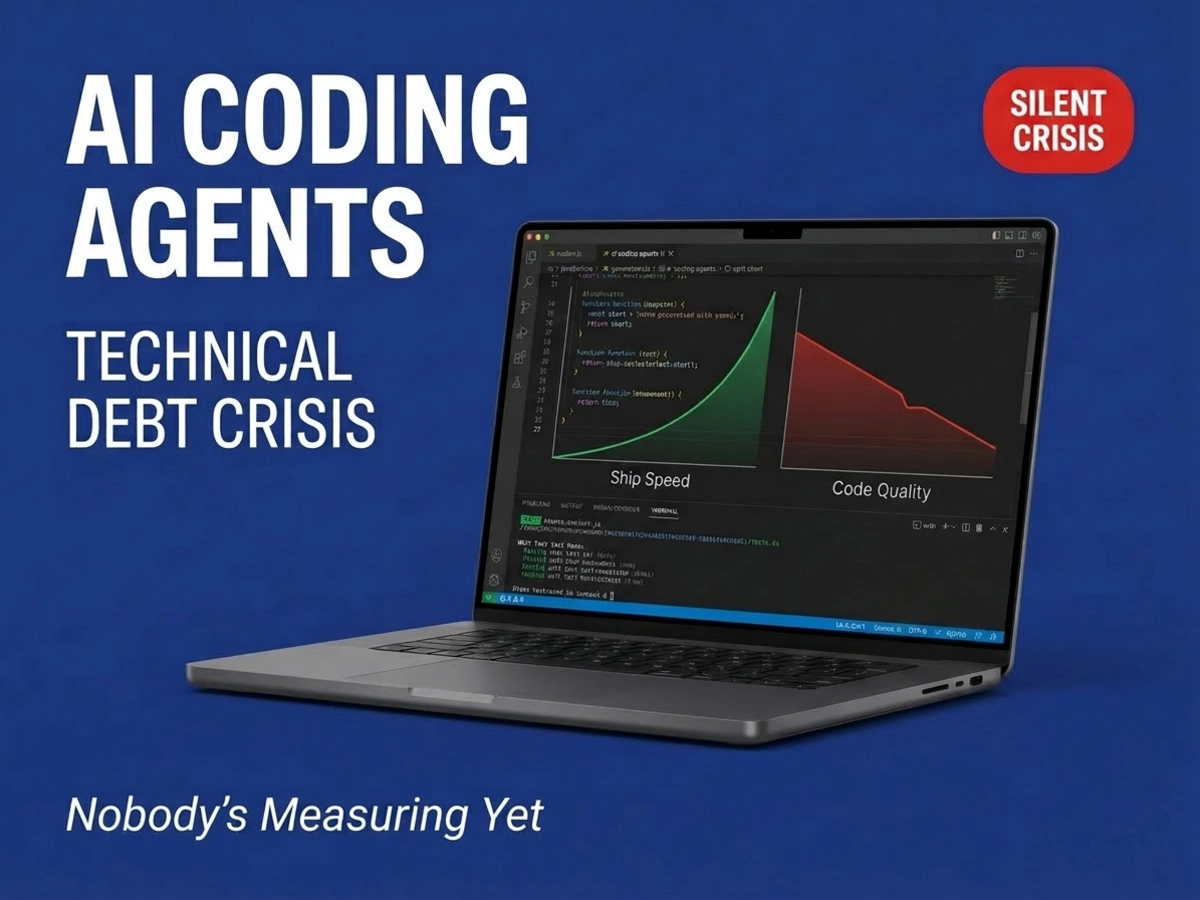

Developers are shipping code faster than ever with AI assistance, but beneath the productivity surge lies a quality crisis that most organizations won't detect until it's catastrophically expensive to fix. While engineering leaders celebrate velocity improvements, a different pattern emerges in the data: code that passes tests today becomes unmaintainable tomorrow.

In the first half of 2025, a research organization called METR ran a randomized controlled trial that produced one of the most uncomfortable findings in AI development research. Sixteen experienced engineers, each a long-term contributor to major open-source repositories, worked through 246 real tasks. Each task was randomly assigned to one of two conditions: state-of-the-art AI coding tools allowed, or no AI at all. When the results came in, the developers had taken 19% longer on the AI-assisted tasks than on the ones they completed manually.

That alone is striking. What makes it disorienting is the perception data alongside it. Before the study, developers predicted AI would speed them up by 24%. After completing the tasks, having just experienced the slowdown firsthand, they still estimated AI had improved their performance by about 20%. The gap between what the stopwatch recorded and what developers believed was happening was nearly 40 percentage points.

This is not a finding about bad tools or inexperienced users. These were veteran contributors to complex, mature codebases, working with leading AI assistants. The METR researchers attribute the slowdown to the cognitive overhead of prompting, reviewing, and correcting AI output on repositories with 10-plus years of architectural history, and caution that the finding is a snapshot of early-2025 conditions on a specific kind of task rather than a universal verdict. The AI suggestions were accepted less than 44% of the time, meaning developers spent significant time in what amounted to a slow editorial process rather than direct construction.

Taken alongside Google's DORA 2024 findings, the result becomes harder to dismiss as a narrow edge case. DORA's survey of roughly 3,000 professionals estimated that every 25% increase in AI adoption was associated with a 7.2% decrease in delivery stability and a 1.5% decrease in throughput, even as individual developers reported feeling more productive. The tools are pervasive: 75.9% of DORA respondents used AI for at least some daily responsibilities. On delivery metrics, though, the system-level returns are negative.

The same divergence appears across the five independent research streams examined here: individual productivity metrics improve or hold steady while aggregate system metrics degrade. This is the core of the measurement problem. Organizations are drawing the wrong conclusions from accurate data, not because they are careless, but because the individual-level metrics they track really do improve while the degradation shows up only in system-level measures.

While surveys and trials capture individual behavior, GitClear's longitudinal analysis of 211 million changed lines of code provides the structural evidence of what AI adoption does to codebases over time. The picture spanning January 2020 through December 2024 documents a consistent directional shift in how engineers interact with existing code.

The most significant metric is the collapse of refactoring activity. Code classified as refactored, representing work that was moved, reorganized, or consolidated, dropped from roughly 25% of changed lines in 2021 to under 10% in 2024, while copy-pasted code grew from 8.3% to 12.3% over the same period. Those two trends are plausibly connected. When developers use AI to write a function, the tool generates a new function. It typically does not scan the existing codebase to determine whether equivalent logic already exists and could be called or extended. Duplication becomes the default.

The same GitClear analysis found that lines of newly added code revised or deleted within two weeks of being written reached 7.9% in 2024, more than double the rate recorded in 2020. Added code now approaches 50% of all changes across the repositories studied. The codebase is growing faster than it is being integrated, consolidated, or stabilized.
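The two-week churn figure can be illustrated with a minimal sketch. This is not GitClear's methodology; the `AddedLine` record, the `churn_rate` function, and the sample data are hypothetical, and the only thing taken from the text above is the definition: the share of newly added lines revised or deleted within 14 days.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, Optional


@dataclass
class AddedLine:
    """Hypothetical record for one newly added line of code."""
    added_at: datetime
    # When the line was next revised or deleted; None if it is still untouched.
    changed_at: Optional[datetime] = None


def churn_rate(lines: Iterable[AddedLine],
               window: timedelta = timedelta(days=14)) -> float:
    """Share of added lines that were revised or deleted within the window."""
    lines = list(lines)
    if not lines:
        return 0.0
    churned = sum(
        1 for line in lines
        if line.changed_at is not None
        and line.changed_at - line.added_at <= window
    )
    return churned / len(lines)


# Illustrative data only: four lines added on the same day.
start = datetime(2024, 1, 1)
sample = [
    AddedLine(start, start + timedelta(days=3)),   # churned
    AddedLine(start, start + timedelta(days=30)),  # changed, but outside the window
    AddedLine(start),                              # never changed
    AddedLine(start, start + timedelta(days=14)),  # churned (boundary counts)
]
print(f"{churn_rate(sample):.1%}")  # 50.0%
```

In practice the records would come from version-control history rather than a hand-built list; how lines are tracked across edits is the hard part and is deliberately left out here.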

Every copied block of logic creates a separate future maintenance obligation. A security vulnerability that should require one patch now requires hunting down four or five implementations of the same function scattered across different modules. An architectural change that should touch one interface now requires coordinated changes across duplicated code that was never meant to diverge but has. The compound interest on this debt runs silently for months, then becomes visible all at once when a system-wide change is needed.
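As a rough illustration of how duplicated logic can be surfaced before it diverges, the sketch below groups Python functions whose arguments and bodies are structurally identical, using only the standard-library `ast` and `hashlib` modules. The function names and the sample modules are hypothetical, and the approach is deliberately naive: it only catches exact structural copies, so a copy with renamed variables would slip through.

```python
import ast
import hashlib
from collections import defaultdict


def function_fingerprint(node: ast.FunctionDef) -> str:
    """Hash a function's arguments and body, ignoring its name and docstring."""
    body = node.body
    # Drop a leading docstring so documentation differences do not matter.
    if (body and isinstance(body[0], ast.Expr)
            and isinstance(body[0].value, ast.Constant)
            and isinstance(body[0].value.value, str)):
        body = body[1:]
    dumped = ast.dump(node.args) + "".join(ast.dump(stmt) for stmt in body)
    return hashlib.sha256(dumped.encode()).hexdigest()


def find_duplicate_functions(sources: dict[str, str]) -> list[list[str]]:
    """Return groups of 'module.function' names that share a fingerprint."""
    groups = defaultdict(list)
    for module_name, source in sources.items():
        for node in ast.walk(ast.parse(source)):
            if isinstance(node, ast.FunctionDef):
                groups[function_fingerprint(node)].append(
                    f"{module_name}.{node.name}")
    return [sorted(names) for names in groups.values() if len(names) > 1]


# Hypothetical modules: the same email cleanup written twice under two names.
sources = {
    "billing": "def normalize_email(value):\n    return value.strip().lower()\n",
    "signup": ("def clean_email(value):\n"
               "    'Tidy an address.'\n"
               "    return value.strip().lower()\n"),
    "reports": "def total(items):\n    return sum(items)\n",
}
print(find_duplicate_functions(sources))
# [['billing.normalize_email', 'signup.clean_email']]
```

Each group in the output is one place where a single patch would otherwise have to be applied several times.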

The code quality concerns from GitClear's data are significant. The security data from enterprise environments is more urgent.

Apiiro, which analyzed tens of thousands of repositories across Fortune 50 enterprises, documented a fundamental inversion in the error profile of AI-generated code. By June 2025, AI-generated code was introducing over 10,000 new security findings per month across the studied repositories, a roughly 10-fold increase since December 2024. Privilege escalation vulnerabilities rose 322%, architectural design flaws jumped 153%, and exposed secrets increased 40%. The same Apiiro research documented that syntax mistakes fell by 76% and logic bugs dropped by more than 60% in AI-assisted code relative to non-AI output. By the surface measures most review processes are designed to catch, AI-assisted code looks better. The problems hiding beneath that surface are another matter entirely.

Independent testing supports the same conclusion from a different angle. Veracode tested more than 100 language models across Java, JavaScript, Python, and C# and found that AI-generated code contains approximately 2.74 times more vulnerabilities than human-written code, with 45% of tested code samples introducing OWASP Top 10 vulnerabilities. Sonar's CEO noted in MIT Technology Review's December 2025 analysis that as AI models have improved, obvious syntax defects have declined while structural maintenance problems, what software engineers call code smells, now constitute more than 90% of the issues found in AI-generated code.

Standard code review evolved to catch the categories of issues that are now declining: syntax errors, obvious logic flaws, common anti-patterns that trigger linters. The categories that are rising (privilege escalation paths, architectural violations, secrets embedded in generated code) require a different kind of scrutiny. Most review processes are not calibrated for it. AI-assisted developers are producing 3 to 4 times more commits, bundled into fewer but much larger pull requests, which means reviewers face higher volume with reduced granularity. The vulnerabilities that will be most expensive to fix are exactly the ones most likely to slip through the review process as it currently operates.

Technical debt, in the traditional sense, lives in repositories. It shows up in code quality scans, duplication reports, and architecture diagrams. The kind of liability that Anthropic's research describes is harder to locate.

In January 2026, Anthropic published the results of a randomized controlled trial examining how AI coding assistance affects comprehension. Fifty-two junior software engineers were split into two groups to learn an unfamiliar Python library. One group had AI assistance; one coded manually. After completing their tasks, both groups took a comprehension quiz with no AI allowed. The AI-assisted group averaged 50% on the quiz compared to 67% for the manual group, a 17-point gap most pronounced in debugging tasks. Those who used AI primarily as a code generator, delegating implementation without engaging with the reasoning behind it, scored below 40%. Those who used AI as a conceptual tool, asking it to explain approaches and challenge their thinking, scored above 65%.

The productivity difference between the two groups was not statistically significant. AI users finished about two minutes faster on average. The comprehension difference was substantial.

The Anthropic team is careful about the limits of this finding: 52 participants, measured immediately after tasks, using an unfamiliar library. Whether these effects compound over months of production work or dissipate with experience is genuinely uncertain. But the mechanism the study identifies has structural implications. When developers consistently delegate code generation without engaging with what they are delegating, comprehension erodes, and debugging capacity erodes with it.

Researchers and practitioners are beginning to call this category of liability cognitive debt: the accumulated failure of development teams to maintain a shared, working theory of the systems they nominally own. A team's theory of its system is not written in code. It lives in the knowledge of why architectural decisions were made, how components are expected to interact, what the implicit contracts between modules are. When AI generates the code, the decisions it embeds are often opaque to the developers who ship it. This points to a liability that sits outside any repository scan. Teams can accumulate cognitive debt faster than code-level debt, and it surfaces differently: not as gradual slowdown, but as sudden paralysis when a non-trivial change is required.

The five evidence streams above all describe a problem already in progress. The talent pipeline data suggests that the organizational capacity to address it is also declining.

Employment among software developers aged 22 to 25 fell nearly 20% between 2022 and 2025, according to Stanford research cited in MIT Technology Review's December 2025 analysis of AI's impact on the developer workforce. According to the LeadDev 2025 survey as documented by Pixelmojo's analysis of 2026 adoption trends, 54% of engineering leaders plan to reduce junior developer hiring in response to AI productivity efficiencies. Stack Overflow surveys tracked over the same period show trust in AI coding tools dropping from 43% to 29% in 18 months, even as usage climbed to 84%, a gap that reflects the organizational pressure to use tools developers themselves are increasingly skeptical of.

No individual data source makes this risk explicit, but the findings fit together in a way that is difficult to dismiss. The developers who would otherwise spend years building refactoring judgment, debugging intuition, and architectural comprehension through hands-on practice are either being hired less often or being onboarded into environments where AI handles most of the work that would have built those skills. The Anthropic study showed that delegation mode impairs comprehension. If junior developers are primarily learning through delegation rather than through the work itself, the skills needed to oversee, audit, and fix AI-generated systems may not develop at the rate the accumulating debt requires.

The technical debt AI creates today will eventually require human judgment to address. The full impact of this is not yet measurable, but the direction of the component trends is not ambiguous.

The reason this crisis accumulates undetected is not organizational negligence. It is a structural feature of how productivity gains and debt costs are distributed across time.

The gains from AI coding tools are immediate, individual, and attributable. A developer writes a feature in three hours rather than six. The manager sees a 50% time reduction. The AI adoption decision looks justified. The costs are deferred, distributed, and invisible in standard reporting. Six different developers each spend an extra hour debugging a poorly integrated AI-generated module over the following quarter. That cost never appears as a line item associated with the AI tool. It shows up as routine maintenance overhead, absorbed into the team's general velocity without being traced to its source.
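The arithmetic in that scenario is worth spelling out, because the immediate saving is smaller than the deferred cost. The sketch below uses only the illustrative numbers from the paragraph above; `net_hours` is a hypothetical helper, not a standard metric.

```python
def net_hours(immediate_saving: float, deferred_costs: list[float]) -> float:
    """Hours saved up front minus hours spent later by anyone on the team."""
    return immediate_saving - sum(deferred_costs)


# From the scenario above: a feature takes three hours instead of six...
saving = 6 - 3
# ...and six developers each lose an hour debugging it the following quarter.
deferred = [1.0] * 6

print(net_hours(saving, deferred))  # -3.0
```

The manager's dashboard records the three hours saved. The six hours spent later are spread across six people's ordinary maintenance time, so the net loss of three hours is never attributed to anything.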

The DORA 2024 team introduced the "Vacuum Hypothesis" to describe a related pattern: time savings from AI get reclaimed, but they tend to get absorbed by lower-value tasks rather than reinvested in the architectural hygiene that would contain debt accumulation. Developers freed from writing boilerplate are not, on average, spending that time refactoring or reviewing architecture. They are handling more tickets, responding to more requests, shipping more features.

Some 84% of developers now use AI tools, while trust in those tools, as measured in Stack Overflow surveys, has declined to 29%. That is not a contradiction. It is a description of rational actors under organizational pressure: developers know the code they are generating needs more scrutiny, they are simultaneously measured on how quickly they ship, and the protected time for thorough review does not exist. The trust-usage inversion may be the most revealing single data point across all five research streams. The result is predictable. The debt accumulates. The metrics show productivity. Nobody triggers an alarm.

AI-generated technical debt also has a different timing signature than the traditional kind. Traditional debt produces visible deceleration: velocity slows over time, and the team feels it. AI-generated technical debt often holds velocity stable until a structural intervention is required, at which point the cost appears suddenly, in the form of emergency architectural rework, security incidents, or the discovery that a system cannot be modified without cascading failures. By that point, the debt is expensive to address and difficult to attribute.

Not every organization using AI coding agents is heading toward a debt crisis. The distinguishing factor is not which tools they use or how frequently. It is what they measure and what they protect.

The teams doing this well treat three categories of work as protected budget rather than overflow capacity, and the distinction matters because none of these is standard practice today.

Refactoring time is allocated on an explicit schedule, not left to accumulate as a wish-list item. When AI generates net-new code by default, human-led consolidation must be planned and protected or the duplication surge GitClear documented becomes permanent.

Architectural review is separated from syntax review: different reviewers, different criteria, different gates. Standard review is calibrated for the bugs AI is already eliminating. Architectural review is calibrated for the privilege escalation paths, design violations, and structural debt that AI is actively generating at scale.

Comprehension verification is applied to AI-heavy contributions. Contributors are expected to explain the logic of recent AI-assisted commits, not merely confirm that tests pass. This is the direct countermeasure for cognitive debt, and it has no equivalent in conventional review practice.

The divergence between teams managing AI debt well and teams generating it unchecked comes down to one principle: AI tools amplify existing organizational discipline rather than compensating for its absence. Teams with strong architectural practices and genuine code review processes find that AI accelerates their best work. Teams that have substituted velocity metrics for quality standards find that AI accelerates the accumulation of problems those standards would have caught. The tool does not change the organizational culture. It does what the culture directs it to do, faster.

What to measure is more tractable than it may appear. Code churn rates tracked specifically for AI-generated code reveal instability early. Duplication rates compared to pre-AI baselines reveal the consolidation deficit that creates compounding maintenance costs. Deployment error rates attributed by code source reveal whether AI-generated features are reaching production with higher incident rates than human-written ones. The 7.2% delivery stability decrease documented by DORA and the 10x security findings surge documented by Apiiro are not inevitable consequences of AI adoption. They are consequences of AI adoption without measurement discipline.
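Attributing deployment errors by code source can be sketched in a few lines, assuming each deployment can be labeled with where its code mostly came from. That labeling is the hard part and is simply assumed here; the `Deployment` record, the `"ai"` and `"human"` labels, and the sample data are all hypothetical.

```python
from collections import Counter
from typing import Iterable, NamedTuple


class Deployment(NamedTuple):
    """Hypothetical record for one production deployment."""
    source: str            # assumed label, e.g. "ai" or "human"
    caused_incident: bool  # whether the deployment was tied to an incident


def incident_rate_by_source(deployments: Iterable[Deployment]) -> dict[str, float]:
    """Fraction of deployments per source that were tied to an incident."""
    totals: Counter = Counter()
    incidents: Counter = Counter()
    for deployment in deployments:
        totals[deployment.source] += 1
        if deployment.caused_incident:
            incidents[deployment.source] += 1
    return {source: incidents[source] / totals[source] for source in totals}


# Illustrative data only, not taken from any of the studies cited here.
history = [
    Deployment("ai", True), Deployment("ai", False),
    Deployment("ai", True), Deployment("ai", False),
    Deployment("human", False), Deployment("human", True),
    Deployment("human", False), Deployment("human", False),
]
print(incident_rate_by_source(history))  # {'ai': 0.5, 'human': 0.25}
```

The same grouping works for churn and duplication rates: the point is that the metric is split by source rather than reported as one blended number.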

For engineering teams building governance frameworks around AI coding tools, the wider developer toolchain also warrants attention in 2026. Homebrew 5.0's Gatekeeper enforcement changes, which affect unsigned command-line packages starting in September, create a related category of unplanned disruption for teams that have not audited their dependency stack. The same measurement discipline that surfaces AI-generated technical debt, knowing what is in your codebase and why, applies equally to the tools that build it.

How do we know if we are already accumulating AI-generated technical debt?

The earliest signal is usually code churn in AI-assisted files: are those files being revised or partially deleted within two weeks of being written at higher rates than the rest of the codebase? A second signal is duplication growth: static analysis tools that detect copy-pasted logic blocks will show a rising rate if AI adoption has outpaced architectural review. A third, often overlooked signal is review time: if pull requests from AI-heavy contributors are taking longer to review and generating more back-and-forth, reviewers are detecting problems that were not caught upstream. Track all three against pre-AI baselines rather than against external benchmarks.
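A minimal sketch of tracking those three signals against a pre-AI baseline follows. The signal names, the 10% tolerance, and every number in the example are assumptions chosen for illustration, not recommended thresholds.

```python
def signals_above_baseline(baseline: dict[str, float],
                           current: dict[str, float],
                           tolerance: float = 0.10) -> dict[str, float]:
    """Relative increase for each signal that rose more than `tolerance`."""
    flagged = {}
    for name, base in baseline.items():
        # Skip signals with no usable baseline or no current measurement.
        if base <= 0 or name not in current:
            continue
        change = (current[name] - base) / base
        if change > tolerance:
            flagged[name] = round(change, 3)
    return flagged


# Hypothetical team measurements before and after AI adoption.
pre_ai = {"churn_rate": 0.04, "duplication_rate": 0.08, "review_hours_per_pr": 2.0}
today = {"churn_rate": 0.06, "duplication_rate": 0.084, "review_hours_per_pr": 3.0}

print(signals_above_baseline(pre_ai, today))
# {'churn_rate': 0.5, 'review_hours_per_pr': 0.5}
```

Here churn and review time have each risen by half relative to the team's own history, while duplication has moved only about 5% and stays under the tolerance.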

Should junior developers be allowed to use AI coding tools?

The risk is not that junior developers use AI. The risk is that anyone, at any experience level, uses AI without engaging with what it generates. The Anthropic research makes the mechanism clear: delegation mode, asking AI to write code and shipping it, impairs comprehension. Tutorial mode, asking AI to explain approaches and following up with questions, can reinforce learning. For junior developers specifically, the governance question is whether the AI usage pattern being encouraged is the one that builds the debugging and comprehension skills they need to develop, or the one that substitutes for developing them. That is a supervision and onboarding design question, not a permission question.

Why does DORA show delivery stability declining when individual developer productivity seems to be improving?

Individual productivity and system delivery measure different things. A single developer writing features faster is an individual outcome. Delivery stability, which measures how often deployments succeed and how quickly the system recovers from failures, is a system outcome. AI can improve the former while degrading the latter if the code it generates is harder to integrate, more likely to conflict with existing architecture, or more likely to carry undetected security issues that surface post-deployment. DORA's finding suggests the gains at the individual level are not converting into gains at the system level, which is the level that actually determines whether software reaches users reliably.

What makes AI-generated security vulnerabilities harder to catch than traditional ones?

Traditional security vulnerabilities tend to cluster in predictable categories that linters, security scanners, and experienced reviewers have been trained to catch: hardcoded credentials, injection vulnerabilities, missing input validation. AI-generated code does produce those, but Apiiro and Veracode both document that the faster-growing category is architectural. Privilege escalation paths and design-level flaws require understanding how the component fits into the broader system, what access it should and should not have, and whether the generated code respects those boundaries. That is a human judgment requirement, and it is one that larger, less granular pull requests make harder to exercise.

How do we make the business case for more deliberate AI adoption to leadership focused on velocity?

Start with cost attribution rather than abstract warnings. Pull request rollback rates, hours spent on emergency debugging, and security incident response times are all measurable and have dollar equivalents. The DORA finding, that every 25% increase in AI adoption correlated with a 7.2% decrease in delivery stability, is a board-level number: what does a 7% reduction in deployment reliability cost your organization annually in incidents, customer impact, and engineering time? Frame the governance investment as protection against a known failure mode with documented financial consequences, not as caution in the face of uncertainty. The evidence for the failure mode is now substantial enough that the burden of proof has shifted.